In today’s digital landscape, organizations rely heavily on applications and services that must remain accessible at all times. Whether it is an internal system used by employees or a customer-facing platform, downtime can lead to lost productivity, financial impact, and damaged reputation. Virtualization has transformed how infrastructure is managed, but it has also increased expectations for uptime and resilience. Instead of relying on single physical servers, workloads are now distributed across clusters, allowing for more flexible recovery options when failures occur.

Availability is not just about preventing outages. It is about minimizing disruption when failures inevitably happen. Hardware components can fail, networks can experience interruptions, and software can crash unexpectedly. The goal of any well-designed virtual environment is to reduce the impact of these failures and ensure that critical workloads continue to operate with minimal interruption.

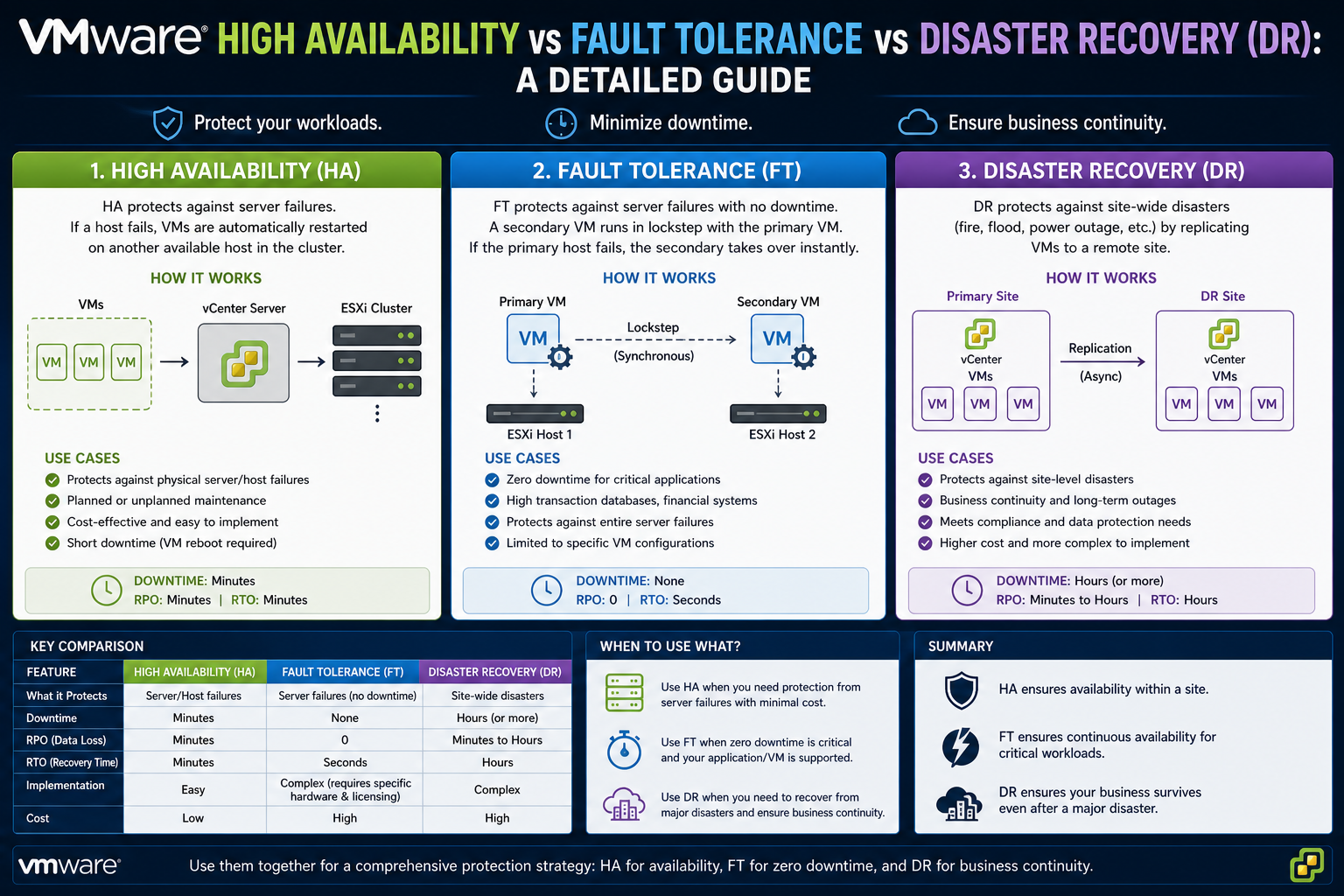

VMware provides multiple technologies designed to achieve different levels of availability. These include High Availability, Fault Tolerance, and Disaster Recovery solutions. Each of these plays a specific role in maintaining uptime and protecting workloads. Understanding how they differ and when to use each one is essential for building a resilient infrastructure.

The Concept of High Availability in Virtualization

High Availability is often the first layer of protection implemented in a VMware environment. It is designed to handle host-level failures by automatically restarting virtual machines on other available hosts within the same cluster. This ensures that workloads do not remain offline for extended periods when hardware issues occur.

The concept behind High Availability is relatively straightforward. Instead of trying to prevent failures entirely, it focuses on rapid recovery. When a host fails, the system detects the failure and initiates a restart of affected virtual machines on healthy hosts. This process reduces downtime compared to manual recovery, but it does not eliminate it completely.

High Availability is considered a reactive solution. It responds after a failure has occurred. This means that there is always a short interruption while the virtual machines are restarted and their operating systems boot up again. For many applications, this level of downtime is acceptable, especially when compared to the alternative of prolonged outages.

One of the reasons High Availability is widely adopted is its simplicity. It does not require complex configuration or specialized hardware. As long as multiple hosts are available in a cluster and shared storage is configured, High Availability can be enabled with minimal effort.

Cluster-Based Infrastructure and Its Importance

At the core of VMware availability solutions is the concept of clustering. A cluster is a group of ESXi hosts that share resources and are managed collectively. Clustering allows workloads to move between hosts and ensures that resources are pooled together for better utilization and resilience.

In a clustered environment, virtual machines are not tied to a single physical server. Instead, they exist as files stored on shared storage that can be accessed by multiple hosts. This shared access is what enables features like High Availability to function effectively.

When a host fails, other hosts in the cluster can access the same virtual machine files and restart them. Without shared storage, this would not be possible. Each host would have isolated storage, and recovery would require manual intervention and data restoration.

Clusters also provide flexibility in resource allocation. Administrators can balance workloads across hosts, ensuring that no single host becomes a bottleneck. This improves overall performance and makes it easier to handle failures when they occur.

The size and design of a cluster play a significant role in availability. Larger clusters provide more redundancy and greater capacity for failover. However, they also require careful planning to ensure that resources are used efficiently.

How High Availability Detects Failures

High Availability relies on multiple mechanisms to detect when a host has failed. The primary method is through network heartbeats exchanged between hosts in the cluster. These heartbeats act as signals that confirm each host is operational and connected.

If a host stops sending heartbeats, the cluster assumes that it may have failed. However, network issues can sometimes cause temporary communication loss. To avoid false positives, VMware uses additional checks such as datastore heartbeats.

Datastore heartbeats involve monitoring shared storage to determine whether a host is still active. If a host can still access the datastore, it may indicate that the issue is network-related rather than a complete failure. This helps the system make more accurate decisions about when to trigger recovery actions.

Once a failure is confirmed, High Availability initiates the restart process for affected virtual machines. This process is automated and does not require administrator intervention.

The detection and response time can vary depending on configuration settings. Administrators can adjust sensitivity levels to balance between fast recovery and avoiding unnecessary failovers.

Virtual Machine Restart Process Explained

When a host failure is detected, the virtual machines that were running on that host are marked for restart. The cluster identifies available hosts with sufficient resources and begins powering on the virtual machines.

This process involves several steps. First, the virtual machine files are accessed from shared storage. Then, the virtual machine is registered on a new host. After that, the system initiates the boot process for the guest operating system.

The total recovery time depends on multiple factors. These include the size of the virtual machine, the performance of the underlying storage, and the complexity of the operating system and applications.

Applications that require significant initialization time may take longer to become fully operational. This is an important consideration when designing availability strategies.

Despite these delays, High Availability significantly reduces downtime compared to manual recovery methods. Instead of waiting for an administrator to respond, the system automatically restores workloads as quickly as possible.

Importance of Resource Management in HA

For High Availability to work effectively, there must be enough available resources in the cluster to handle failover scenarios. This includes CPU, memory, and storage capacity.

If all hosts in a cluster are heavily utilized, there may not be enough capacity to restart virtual machines after a failure. This can lead to incomplete recovery and extended downtime for some workloads.

To address this, VMware provides admission control policies. These policies reserve a portion of cluster resources specifically for failover. This ensures that sufficient capacity is always available to handle host failures.

Administrators can configure admission control in different ways. One approach is to reserve resources based on the number of host failures that the cluster should tolerate. Another approach is to reserve a percentage of total resources.

Proper resource management is critical for maintaining availability. Without it, even the best failover mechanisms may not function as intended.

Handling Host Isolation Scenarios

Not all failures involve complete host shutdown. In some cases, a host may lose network connectivity but remain powered on. This is known as host isolation.

Host isolation presents a unique challenge because the system must decide whether the virtual machines on that host are still accessible or need to be restarted elsewhere.

VMware allows administrators to define how the system should respond to isolation events. Options include leaving virtual machines running, shutting them down, or powering them off and restarting them on other hosts.

The correct choice depends on the environment. In some cases, leaving virtual machines running may be the best option if network connectivity is expected to be restored quickly. In other cases, restarting workloads elsewhere may provide faster recovery.

Careful configuration is required to avoid issues such as duplicate virtual machines or data inconsistencies.

Storage Failure Response in High Availability

Storage plays a critical role in virtualization, and failures at the storage level can have significant impact. High Availability includes mechanisms to detect and respond to storage issues.

One type of storage failure is Permanent Device Loss, where a datastore becomes completely unavailable. In this case, virtual machines may need to be restarted on hosts that still have access to the storage.

Another scenario is All Paths Down, where connectivity to storage is temporarily lost. Since this condition may be resolved automatically, VMware allows administrators to configure a delay before taking action.

This flexibility helps prevent unnecessary failovers caused by temporary storage issues.

By monitoring both host and storage health, High Availability provides comprehensive protection against a wide range of failure scenarios.

Role of Virtual Machine Monitoring

In addition to monitoring hosts and storage, VMware can also monitor the health of individual virtual machines. This feature uses heartbeats from VMware Tools to determine whether the guest operating system is functioning properly.

If a virtual machine becomes unresponsive, the system can automatically restart it. This helps recover from operating system crashes and application failures.

Virtual machine monitoring adds another layer of protection, ensuring that workloads remain operational even when issues occur داخل the guest operating system.

However, this feature must be configured carefully. Aggressive settings may lead to unnecessary restarts, especially for workloads that experience temporary performance issues.

Testing and tuning are essential to achieve the right balance between responsiveness and stability.

Why High Availability Is Widely Used

High Availability is one of the most widely adopted VMware features because it provides a strong balance between simplicity and effectiveness. It requires minimal configuration and does not demand specialized hardware.

For most organizations, HA delivers sufficient protection against common infrastructure failures. It ensures that workloads are automatically restarted and reduces the need for manual intervention.

Because it is included in standard vSphere environments, it is often the first step in building a resilient infrastructure.

High Availability serves as the foundation for more advanced availability strategies. While it does not provide zero downtime, it significantly improves overall system reliability.

Introduction to Continuous Availability Requirements

While High Availability focuses on restarting virtual machines after a failure, some workloads demand a higher level of protection where downtime is not acceptable at all. In certain environments, even a few seconds of interruption can lead to financial loss, data inconsistency, or service disruption. Financial systems, critical databases, and real-time applications often fall into this category.

To address these strict requirements, VMware provides a more advanced solution known as Fault Tolerance. Unlike restart-based recovery, Fault Tolerance is designed to eliminate downtime entirely by ensuring that a secondary virtual machine is always running in sync with the primary one. This creates a continuous availability model where failure does not result in service interruption.

Fault Tolerance represents a different philosophy compared to High Availability. Instead of reacting after a failure occurs, it proactively maintains an identical running copy of a virtual machine. This allows workloads to continue operating seamlessly even when underlying hardware fails unexpectedly.

Understanding VMware Fault Tolerance Architecture

Fault Tolerance works by creating a live shadow instance of a virtual machine on a separate host. This secondary virtual machine is an exact replica of the primary one and runs in lockstep with it. Every CPU instruction and memory change is continuously mirrored to ensure both instances remain identical at all times.

The synchronization process happens in real time. As the primary virtual machine executes operations, the secondary virtual machine receives the same execution stream. This ensures that both machines are always in the same state.

Unlike High Availability, which relies on restarting virtual machines after failure, Fault Tolerance ensures that there is no restart required. If the primary host fails, the secondary virtual machine immediately takes over execution without interruption.

This architecture eliminates downtime caused by host failure. From an application perspective, the transition is seamless and often unnoticeable.

Fault Tolerance requires careful coordination between hosts, networking, and storage to maintain consistent synchronization.

How Fault Tolerance Maintains Synchronization

The core mechanism behind Fault Tolerance is the continuous replication of execution state. Instead of replicating data at the storage level, it replicates at the compute level.

This means that CPU operations, memory updates, and system states are duplicated in real time. The primary virtual machine executes instructions while the secondary virtual machine receives and applies the same instructions simultaneously.

A specialized logging mechanism is used to transmit these execution changes between hosts. This ensures that both virtual machines remain perfectly aligned.

Because both instances are running simultaneously, there is no need to restart applications or operating systems during a failure event. The secondary virtual machine is already in a ready-to-run state.

This approach provides extremely high availability guarantees, but it also introduces performance and infrastructure overhead due to constant synchronization.

Failover Process in Fault Tolerance

When a failure occurs in a Fault Tolerant environment, the transition from primary to secondary is immediate. There is no reboot process, no application restart, and no session loss.

The secondary virtual machine instantly becomes the primary instance and continues execution from the exact point where the failure occurred.

This seamless failover is one of the key advantages of Fault Tolerance. Applications remain operational without interruption, making it suitable for workloads that cannot tolerate downtime.

After failover, VMware automatically creates a new secondary virtual machine on another host to restore redundancy. This ensures that protection remains active even after a failure event.

This continuous recovery cycle maintains high resilience throughout the environment.

Use Cases for Fault Tolerance

Fault Tolerance is designed for very specific workloads that require continuous uptime. It is not intended for general-purpose use across all virtual machines.

One of the most common use cases is financial trading systems, where even milliseconds of downtime can result in lost transactions or revenue. These systems require uninterrupted execution.

Another use case is critical database systems that support real-time transactions. Any interruption in database processing can lead to inconsistencies or service failures.

Legacy applications that do not support clustering can also benefit from Fault Tolerance. In cases where applications cannot be redesigned for distributed architecture, Fault Tolerance provides a way to achieve high availability without modifying the software.

It is also used in environments where compliance requirements demand continuous service availability.

However, due to its resource intensity, it is typically reserved for the most critical workloads only.

Hardware and Infrastructure Requirements

Fault Tolerance has strict infrastructure requirements compared to High Availability.

Hosts must support specific CPU technologies that enable fast synchronization between virtual machines. Modern processors are required to handle the continuous instruction replication process efficiently.

High-speed networking is essential. Since execution state is constantly transmitted between hosts, low latency and high bandwidth connections are required. Dedicated networking for Fault Tolerance traffic is often recommended to avoid congestion.

Storage systems must also be highly reliable and accessible by both primary and secondary hosts. Shared storage ensures that virtual machines can operate consistently across failover scenarios.

Because of these requirements, Fault Tolerance is usually deployed in carefully designed environments with optimized hardware configurations.

Performance Considerations in Fault Tolerance

One of the key trade-offs in Fault Tolerance is performance overhead. Since every operation is duplicated in real time, there is additional load on CPU, memory, and network resources.

This overhead can reduce overall system efficiency compared to standard virtual machines. As a result, Fault Tolerant virtual machines are often limited in number per host.

Resource planning becomes extremely important. Overcommitting resources can negatively impact both primary and secondary virtual machine performance.

Workloads that are CPU-intensive or highly dynamic may experience more overhead compared to stable workloads.

Because of these constraints, Fault Tolerance is not typically used for large-scale deployments. Instead, it is reserved for selective, mission-critical applications.

Limitations of Fault Tolerance

Although Fault Tolerance provides continuous availability, it has several limitations that restrict its widespread use.

It is limited in terms of supported virtual machine configurations. Not all workloads or virtual machine sizes are compatible with Fault Tolerance.

It consumes significantly more resources compared to High Availability. Running two synchronized virtual machines simultaneously increases infrastructure demand.

It does not protect against all types of failures. For example, it does not replace Disaster Recovery solutions for site-level outages or data center failures.

It also requires careful configuration and monitoring to ensure synchronization remains stable.

These limitations mean that Fault Tolerance must be used strategically rather than broadly.

Difference Between Fault Tolerance and High Availability

The key difference between Fault Tolerance and High Availability lies in how they handle failure.

High Availability reacts after a failure by restarting virtual machines on another host. This results in brief downtime.

Fault Tolerance prevents downtime entirely by maintaining a continuously running secondary virtual machine.

High Availability is suitable for most workloads due to its efficiency and simplicity. Fault Tolerance is designed for extreme cases where downtime is not acceptable.

High Availability is cost-effective and scalable. Fault Tolerance is resource-intensive and limited in scale.

Understanding this difference is critical when designing a VMware infrastructure.

Operational Use of Fault Tolerance

In real-world environments, Fault Tolerance is rarely used across all workloads. Instead, it is selectively applied to the most critical systems.

Administrators typically identify specific applications that require zero downtime and enable Fault Tolerance only for those workloads.

This selective approach ensures that infrastructure resources are used efficiently while still protecting essential services.

Regular monitoring is also required to ensure synchronization remains healthy between primary and secondary virtual machines.

Any disruption in replication must be addressed immediately to maintain protection.

Fault Tolerance as a Specialized Solution

Fault Tolerance represents a highly specialized availability solution within VMware environments. It is not intended to replace High Availability but to complement it.

While HA provides broad protection for most workloads, FT provides extreme protection for a small subset of critical systems.

Together, they form a layered availability strategy that balances cost, performance, and resilience.

Understanding the Need for Disaster Recovery in Virtual Environments

Even with strong cluster-level protection like High Availability and advanced continuous availability through Fault Tolerance, there are still scenarios that cannot be handled within a single data center. Large-scale failures such as complete site outages, natural disasters, cyber incidents, or major storage infrastructure collapse can bring down an entire environment at once. In these situations, local recovery mechanisms are not enough because the entire infrastructure may become unavailable.

This is where Disaster Recovery becomes essential. Disaster Recovery is not focused on single host or single cluster failures. Instead, it is designed to protect against catastrophic events that affect an entire site or geographical location. It ensures that critical workloads can be restored and resumed at a secondary location when the primary environment is no longer operational.

Unlike High Availability, which reacts quickly within a cluster, and Fault Tolerance, which maintains continuous execution, Disaster Recovery operates at a broader infrastructure level. It focuses on replication, failover planning, and controlled recovery processes that allow organizations to resume operations after major disruptions.

Disaster Recovery is a key component of business continuity planning. It defines how systems will be recovered, how data will be restored, and how services will be brought back online after a disaster event.

Site-Level Failures and Their Impact on Infrastructure

A site-level failure refers to a situation where an entire data center or physical location becomes unavailable. This can happen due to power outages, hardware failures affecting multiple systems, network backbone issues, environmental disasters, or security incidents.

In such cases, even if virtual machines are protected within a cluster, they become inaccessible because the entire site hosting them is down. High Availability cannot function because there are no operational hosts available within the cluster. Fault Tolerance also fails to provide protection because both primary and secondary instances typically rely on infrastructure within the same environment or closely connected systems.

This highlights a critical gap in local availability solutions. While they protect against hardware or host-level failures, they do not address complete site loss.

Disaster Recovery fills this gap by maintaining copies of virtual machines in a separate location. These copies can be activated when the primary site becomes unavailable, ensuring continuity of operations.

The effectiveness of Disaster Recovery depends heavily on planning, replication strategy, and infrastructure readiness at the secondary site.

Core Concept of VMware Disaster Recovery

At its core, Disaster Recovery in VMware environments is based on replication and controlled failover. Virtual machines running in a primary site are continuously or periodically copied to a secondary site. These copies are stored on infrastructure that can take over operations when needed.

The goal is to ensure that a recent version of workloads is always available at an alternate location. In the event of a disaster, these replicated workloads can be powered on and brought into production use.

Unlike Fault Tolerance, Disaster Recovery does not maintain a live execution state. Instead, it relies on data replication and recovery orchestration. This means there may be some data loss depending on the replication interval, but it provides protection against complete infrastructure loss.

Disaster Recovery introduces the concept of Recovery Point Objective and Recovery Time Objective. These define how much data loss is acceptable and how quickly systems should be restored after a failure.

This makes Disaster Recovery more strategic and planning-focused compared to the reactive nature of High Availability or the real-time nature of Fault Tolerance.

vSphere Replication as a Core DR Mechanism

One of the key technologies used in VMware Disaster Recovery is vSphere Replication. This tool is designed to replicate virtual machines from a primary site to a secondary site on a configurable schedule.

Replication can occur continuously or at defined intervals depending on business requirements. The frequency of replication directly affects how much data could be lost during a disaster event.

A shorter replication interval results in lower data loss but requires more network bandwidth and storage resources. A longer interval reduces infrastructure load but increases potential data loss.

vSphere Replication also supports point-in-time recovery. This allows administrators to restore virtual machines to a previous state before a failure or corruption event occurred. This is particularly useful in scenarios involving data corruption or malicious activity.

Replication is handled at the virtual machine level, which provides flexibility in selecting which workloads need protection. Not all virtual machines need to be replicated, allowing organizations to prioritize critical systems.

This granular approach makes vSphere Replication a flexible and widely used component of VMware Disaster Recovery strategies.

Recovery Point Objective and Recovery Time Objective

Disaster Recovery planning is heavily defined by two key metrics: Recovery Point Objective and Recovery Time Objective.

Recovery Point Objective refers to the maximum acceptable amount of data loss measured in time. For example, if replication occurs every 15 minutes, the Recovery Point Objective is 15 minutes. This means that in a disaster, up to 15 minutes of data may be lost.

Recovery Time Objective refers to the maximum acceptable time required to restore services after a disaster. This includes the time needed to power on virtual machines, reconfigure networking, and restore application functionality.

These two metrics guide how Disaster Recovery solutions are designed. Critical applications may require very low Recovery Point Objectives and Recovery Time Objectives, while less important systems may tolerate longer delays.

Balancing these objectives requires careful consideration of infrastructure capabilities, bandwidth availability, and business requirements.

Role of Site Recovery Manager in Disaster Recovery

While vSphere Replication handles data movement, VMware Site Recovery Manager provides orchestration for Disaster Recovery processes. It automates the failover and recovery of virtual machines at the secondary site.

Site Recovery Manager allows administrators to define recovery plans. These plans specify the order in which virtual machines should be powered on, how networking should be configured, and how dependencies between applications should be handled.

This orchestration is critical because many applications depend on multiple services. For example, a database must be started before application servers that rely on it. Without proper sequencing, applications may fail even after recovery.

Site Recovery Manager also enables non-disruptive testing. Organizations can simulate Disaster Recovery scenarios without impacting production systems. This allows validation of recovery plans and ensures readiness before an actual disaster occurs.

Testing is performed in isolated networks, ensuring that production traffic is not affected during simulation.

This capability provides confidence in recovery processes and helps organizations meet compliance and audit requirements.

Failover Process in Disaster Recovery

When a disaster occurs, a controlled failover process is initiated. This process involves activating replicated virtual machines at the secondary site.

Unlike High Availability, where recovery is automatic and immediate within a cluster, Disaster Recovery failover is often planned and executed manually or semi-automatically.

The process includes verifying replication status, selecting recovery points, powering on virtual machines, and configuring network settings.

Depending on the configuration, IP addresses may need to be changed to match the secondary site network. This ensures that applications remain accessible after recovery.

Once workloads are running at the secondary site, operations continue from there until the primary site is restored.

After recovery, a reverse replication process may be used to synchronize changes back to the original site.

Infrastructure Requirements for Disaster Recovery

Disaster Recovery requires infrastructure at both primary and secondary sites. Both locations must have compute resources, storage systems, and networking capabilities.

Bandwidth between sites is also critical because replication traffic must be transferred efficiently. Insufficient bandwidth can lead to replication delays and increased Recovery Point Objectives.

Storage compatibility between sites is important to ensure virtual machines can run without modification after failover.

Network design must support seamless transition between sites, including IP addressing schemes and routing configurations.

Because Disaster Recovery spans multiple locations, it is more complex to design and maintain compared to High Availability or Fault Tolerance.

Importance of Planning in Disaster Recovery

Disaster Recovery is not a feature that can be enabled and forgotten. It requires continuous planning, testing, and validation.

Organizations must regularly review recovery plans to ensure they match current infrastructure and application requirements.

Changes in workloads, network design, or storage systems can impact recovery effectiveness.

Testing is essential to confirm that failover processes work as expected.

Without proper planning, Disaster Recovery solutions may fail during real emergencies despite being technically configured.

This makes governance and operational discipline critical components of Disaster Recovery strategy.

Disaster Recovery as a Strategic Layer

Disaster Recovery represents the highest level of protection in VMware availability architecture. It extends beyond local infrastructure and ensures business continuity even in extreme scenarios.

While High Availability protects against host failures and Fault Tolerance protects against real-time disruption, Disaster Recovery protects against complete site loss.

It is slower compared to other solutions but provides the broadest level of coverage.

Disaster Recovery is not a replacement for HA or FT but a complementary layer that completes the overall availability strategy.

Comparing the Core Purpose of HA, FT, and DR

When designing a resilient VMware environment, it is important to understand that High Availability, Fault Tolerance, and Disaster Recovery are not competing solutions. Instead, they operate at different layers of infrastructure protection and address different types of failure scenarios.

High Availability is focused on recovering from host-level failures within a cluster. It ensures that virtual machines are automatically restarted on healthy hosts when a physical server fails. Its primary goal is to reduce downtime after an unexpected hardware or host issue.

Fault Tolerance operates at a more advanced level. It ensures continuous availability by running a secondary virtual machine in real time alongside the primary one. If the primary instance fails, the secondary instantly takes over without interruption.

Disaster Recovery operates at the widest scope. It protects against complete site or data center failure by replicating workloads to an alternate location. It ensures that services can be restored even when the entire primary infrastructure is unavailable.

These three technologies together form a layered approach to availability, where each layer protects against a different category of failure.

Differences in Downtime and Recovery Behavior

One of the most important differences between these technologies is how they handle downtime.

High Availability involves a short period of downtime. When a host fails, virtual machines must be restarted on another host. This causes a brief interruption while systems boot and applications initialize.

Fault Tolerance eliminates downtime entirely. Because a secondary virtual machine is already running in sync, there is no need for reboot or recovery time. Failover happens instantly and transparently.

Disaster Recovery involves the longest recovery time. Since workloads must be replicated to a different site and then powered on, there is a controlled recovery process. This can take minutes or longer depending on configuration, but it protects against large-scale failures.

The difference in downtime reflects the scope of protection each solution provides. Faster recovery usually comes with higher resource usage and complexity.

Infrastructure Scope and Deployment Differences

High Availability operates within a single cluster. It depends on multiple ESXi hosts sharing storage and resources within the same environment. Its scope is limited to local infrastructure.

Fault Tolerance also operates within a cluster but requires tighter synchronization between hosts. It demands low latency networking and compatible hardware to support continuous replication of execution state.

Disaster Recovery extends beyond a single cluster or data center. It requires a secondary site with its own compute, storage, and networking infrastructure. It also requires replication mechanisms to keep workloads synchronized between locations.

This difference in scope makes Disaster Recovery the most complex to implement, while High Availability is the simplest.

Fault Tolerance sits in the middle, requiring more specialized infrastructure than HA but less geographic complexity than DR.

Resource Consumption and Performance Impact

Resource usage varies significantly across these technologies.

High Availability has relatively low overhead. It does not consume additional resources during normal operation because virtual machines run normally until a failure occurs.

Fault Tolerance has high resource consumption. It runs duplicate virtual machines simultaneously and continuously synchronizes execution state. This increases CPU, memory, and network usage significantly.

Disaster Recovery also consumes resources, but in a different way. It requires storage and network capacity for replication, as well as compute resources at the secondary site for failover readiness.

The cost of each solution increases with the level of protection it provides. Higher availability often requires more infrastructure investment.

Use Case Suitability for Each Technology

Each technology is suited for different types of workloads.

High Availability is suitable for general production workloads. Web servers, application servers, and internal business systems often use HA because it provides strong protection with minimal complexity.

Fault Tolerance is suitable for mission-critical applications that require zero downtime. Financial systems, real-time processing applications, and certain legacy systems benefit from FT due to its continuous execution model.

Disaster Recovery is suitable for enterprise-wide protection. It is used for protecting entire environments against disasters such as data center outages, regional failures, or large-scale infrastructure loss.

In real-world environments, organizations often combine all three technologies to match different workload requirements.

Complexity and Operational Management

High Availability is relatively easy to configure and manage. It requires cluster setup, shared storage, and basic configuration settings. Once enabled, it operates automatically with minimal intervention.

Fault Tolerance is more complex. It requires compatible hardware, strict networking requirements, and careful resource planning. It also needs continuous monitoring to ensure synchronization remains healthy.

Disaster Recovery is the most complex from an operational perspective. It involves replication setup, secondary site management, recovery planning, and regular testing. It also requires coordination between infrastructure, networking, and application teams.

Operational complexity increases with the level of protection provided, making planning and governance critical.

Business Impact and Risk Coverage

From a business perspective, each solution addresses different levels of risk.

High Availability reduces risk from common hardware failures and minimizes downtime for standard operations. It is the baseline protection for most environments.

Fault Tolerance reduces risk to near zero for selected workloads. It ensures continuous service availability, which is essential for critical systems where even brief downtime is unacceptable.

Disaster Recovery reduces risk from catastrophic events. It ensures business continuity even when entire sites fail.

Together, they provide layered protection that aligns technical resilience with business continuity requirements.

How These Technologies Work Together

In a well-designed VMware environment, High Availability, Fault Tolerance, and Disaster Recovery are not used independently but as complementary layers.

High Availability acts as the first line of defense, handling most routine failures automatically.

Fault Tolerance is applied selectively to critical workloads that cannot tolerate interruption.

Disaster Recovery provides the final layer of protection for large-scale failures beyond the local environment.

This layered approach ensures that different types of risks are addressed appropriately without overloading infrastructure or increasing unnecessary complexity for all workloads.

By combining these technologies, organizations can build a flexible and resilient architecture that balances performance, cost, and availability.

Final Conclusion

High Availability, Fault Tolerance, and Disaster Recovery each serve a distinct purpose in VMware environments.

High Availability focuses on fast recovery after host failures and provides a practical, widely used solution for most workloads.

Fault Tolerance ensures continuous availability by eliminating downtime entirely for critical applications, but requires higher resources and careful configuration.

Disaster Recovery protects against large-scale infrastructure or site failures and ensures business continuity across locations through replication and orchestrated recovery.

Understanding the differences between these technologies is essential for designing a reliable virtualization strategy. Instead of choosing only one, most modern environments use all three in combination to achieve layered protection, ensuring systems remain available under a wide range of failure scenarios.