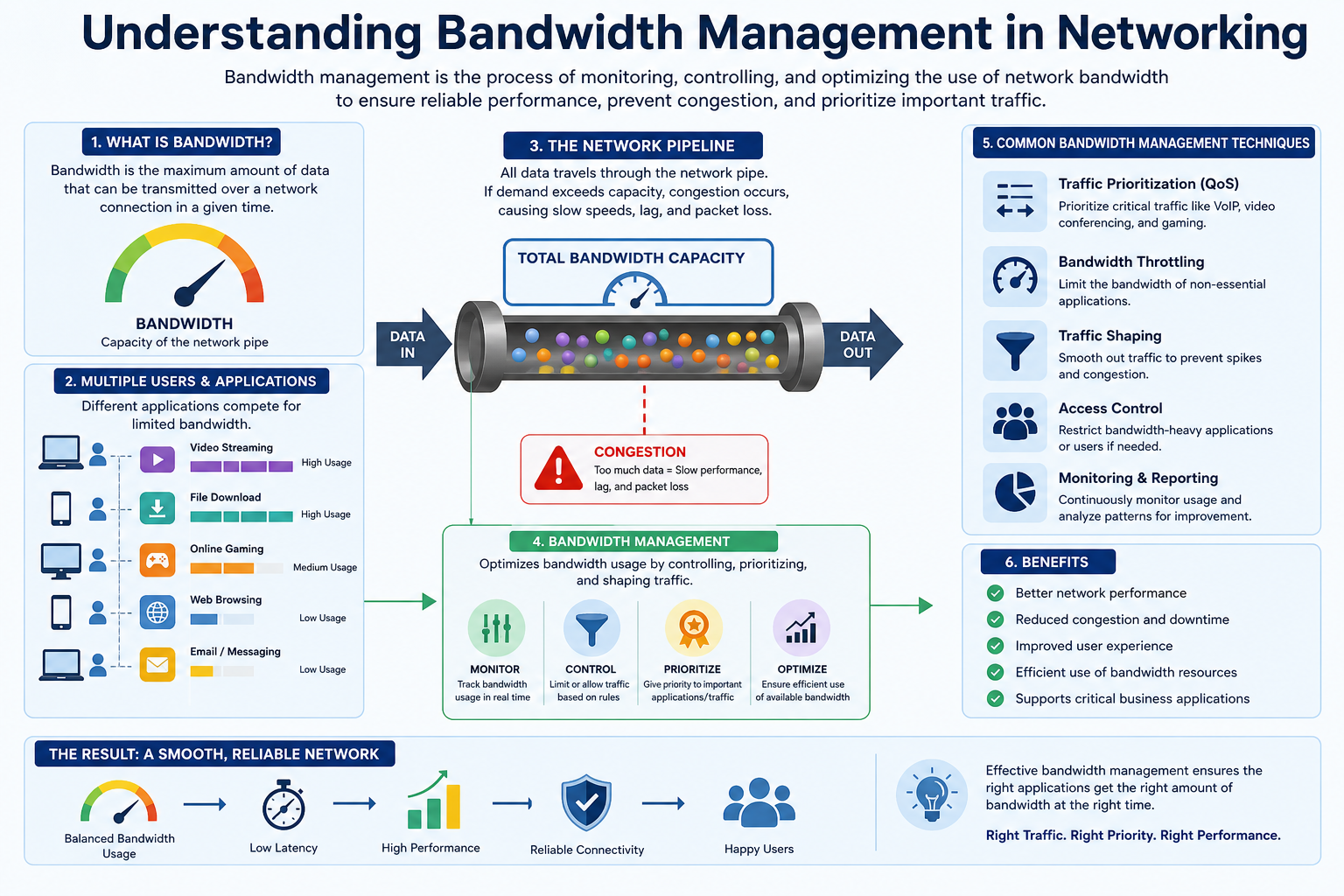

Bandwidth management refers to the structured process of controlling, organizing, and optimizing how data moves across a computer network. In any environment where multiple users, applications, and devices compete for limited network resources, bandwidth management plays a critical role in ensuring smooth, stable, and efficient communication. It is not just about limiting usage but about intelligently distributing available capacity so that essential services always perform reliably while less important traffic does not interfere with overall performance.

In modern digital systems, organizations depend heavily on continuous data flow. Whether it is emails, cloud applications, video conferencing, file transfers, or online services, everything relies on network bandwidth. Without proper management, networks can become congested, slow, and unreliable. Bandwidth management solves this challenge by prioritizing traffic, balancing loads, and ensuring that the most important data receives the resources it needs at the right time.

Importance of Managing Network Resources

In any organization, especially those that depend on internet-based operations, network resources are as important as physical resources like electricity or machinery. When bandwidth is not managed properly, users may experience delays, dropped connections, or poor application performance. This can directly affect productivity, communication, and customer experience.

Proper bandwidth management ensures that critical business operations are not disrupted. For example, communication tools used for meetings or customer support can be prioritized over background activities like software updates or large file downloads. This prioritization helps maintain a stable working environment even during high network usage periods.

Another important aspect is cost control. Internet service capacity is not unlimited, and organizations often pay based on the level of bandwidth they require. Without proper management, networks may consume more bandwidth than necessary, leading to higher operational costs. Efficient management ensures that resources are used wisely, avoiding waste and unnecessary expansion.

Additionally, bandwidth management contributes to fairness in network usage. In many workplaces, multiple users share the same connection. Without control mechanisms, a few users running heavy applications could slow down the entire network. By distributing bandwidth fairly, organizations ensure that everyone gets a reasonable share of resources.

Understanding the Basics of Bandwidth

Bandwidth can be understood as the maximum amount of data that can be transmitted through a network connection within a specific period of time. It represents the capacity of the network rather than the speed alone. A higher bandwidth means more data can travel simultaneously, resulting in smoother performance for applications that rely on continuous data exchange.

To understand this concept more clearly, it can be compared to a highway system. If a highway has more lanes, it can accommodate more vehicles at the same time, reducing traffic congestion. Similarly, a network with higher bandwidth can handle more data traffic without slowing down.

Bandwidth is commonly measured in units such as kilobits per second, megabits per second, or gigabits per second. These measurements indicate how much data can be transferred in one second. The higher the value, the greater the capacity of the network.
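As a rough worked example of these units, the sketch below estimates how long a file takes to cross a link. It assumes an ideal link with no latency or protocol overhead, and the function name `transfer_seconds` is purely illustrative. The step that is easy to miss is that file sizes are usually quoted in bytes while link capacity is quoted in bits.

```python
def transfer_seconds(size_megabytes: float, link_mbps: float) -> float:
    """Ideal time to move a file over a link, ignoring latency and protocol overhead."""
    size_megabits = size_megabytes * 8  # 1 byte = 8 bits
    return size_megabits / link_mbps


# A 500 MB file over a 100 Mbps link.
print(transfer_seconds(500, 100))  # 40.0
```

Real transfers take somewhat longer than this estimate because of protocol overhead, latency, and competing traffic.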

Bandwidth is not the same as speed. Bandwidth is how much data can flow per second, whereas the speed a user perceives also depends on latency, the time a piece of data takes to travel from one point to another. A network may have high bandwidth and still feel slow if latency is high or the link is congested.

Upstream and Downstream Data Flow

Network traffic is generally divided into two main directions: upstream and downstream. These two categories help in understanding how data moves between a user’s device and the internet.

Upstream traffic refers to data that is sent from a local device to the internet or another network. This includes activities such as uploading files, sending emails with attachments, or sharing content through online platforms. Any action where data leaves the user’s system and moves outward is considered upstream communication.

Downstream traffic, on the other hand, refers to data that is received from the internet or external sources. This includes browsing websites, streaming videos, downloading files, and receiving emails. In most cases, downstream traffic is heavier than upstream because users consume more content than they upload.

Both upstream and downstream activities are important, and effective bandwidth management must consider both directions. If one direction becomes overloaded, it can affect the overall performance of the network. For example, excessive upstream usage from backups or large uploads can slow down downstream activities like video streaming or web browsing, because downloads over TCP depend on acknowledgements that travel upstream and are delayed when that direction is saturated.

Balancing both directions ensures that users experience smooth and consistent connectivity regardless of the type of activity they are performing.

Factors That Influence Bandwidth Performance

Several elements can affect how bandwidth performs within a network environment. One of the most significant factors is the underlying network infrastructure. This includes hardware such as routers, switches, and cables. Older or low-quality equipment can limit the overall capacity of the network, even if the internet connection itself is fast.

The type of connection provided by an internet service provider also plays a major role. Different technologies offer different levels of performance. For instance, fiber-based connections generally provide higher and more stable bandwidth compared to older technologies like DSL. The choice of connection directly impacts how much data can be handled at any given time.

Network congestion is another important factor. When too many users or applications attempt to use the network simultaneously, available bandwidth gets divided among them. This can lead to slower performance and delays in data delivery. Without proper management, congestion can significantly reduce efficiency.

Software and application behavior also influence bandwidth usage. Some applications consume large amounts of data in the background without user awareness. These hidden processes can reduce available bandwidth for more important tasks if not properly controlled.

Environmental and configuration factors also contribute. Incorrect network settings, outdated firmware, or poorly optimized systems can reduce performance and create unnecessary bottlenecks. Regular maintenance is essential to keep the network functioning efficiently.

Role of Network Optimization in Modern Systems

In today’s digital environment, organizations rely on multiple applications running simultaneously across shared networks. This makes optimization essential. Without structured control, networks can quickly become overloaded, leading to slow performance and interruptions in service.

Bandwidth management ensures that critical operations are always prioritized. For example, communication systems used for real-time interaction require consistent and stable connections. By giving priority to such applications, organizations can maintain uninterrupted communication even during peak usage times.

Non-essential activities can be controlled or delayed when necessary. This does not mean blocking them completely, but rather managing their usage in a way that they do not interfere with essential operations. This balance helps maintain both productivity and user satisfaction.

Optimization also improves overall efficiency by reducing unnecessary data consumption. When network traffic is properly organized, less bandwidth is wasted, and the system performs more predictably. This leads to better utilization of available resources without requiring constant upgrades.

Understanding Traffic Behavior in Networks

Network traffic is not always consistent. It fluctuates based on user activity, time of day, and application demands. During peak hours, more users access the network, increasing overall load. During off-peak hours, usage decreases, resulting in lighter traffic.

Understanding these patterns is essential for effective bandwidth management. By analyzing how traffic behaves, network administrators can make informed decisions about resource allocation. This helps in preventing congestion and ensuring smooth performance at all times.

Certain applications generate constant traffic, while others operate intermittently. For example, streaming services require continuous data flow, while file downloads occur in bursts. Recognizing these differences helps in designing better management strategies.

Without understanding traffic behavior, it becomes difficult to maintain stability. Random or unmanaged traffic distribution can lead to unpredictable performance issues that affect user experience.

Why Structured Control Becomes Necessary

As networks grow in size and complexity, manual control becomes insufficient. Automated and structured bandwidth management systems become necessary to handle increasing demand. These systems continuously monitor traffic and make adjustments based on predefined rules.

Structured control ensures that no single application or user consumes excessive resources. It also helps maintain consistent performance across the entire network. This is especially important in environments where multiple departments or services depend on shared infrastructure.

By applying structured control mechanisms, organizations can maintain stability even under heavy usage conditions. It ensures that essential operations remain unaffected while still allowing flexibility for general usage.

This controlled environment forms the foundation of efficient digital communication systems and supports long-term scalability as network demands continue to grow.

Traffic Prioritization in Network Systems

Traffic prioritization is one of the core principles of bandwidth management and focuses on deciding which types of data should be given preference when network resources are limited. In any shared network environment, multiple applications compete for bandwidth at the same time. Without prioritization, all data is treated equally, which can lead to delays in critical services and reduced overall performance.

In practical terms, traffic prioritization ensures that important business functions always receive sufficient network resources. For example, real-time communication tools such as voice calls or video conferencing require stable and uninterrupted data flow. If these services are delayed due to background activities like downloads or system updates, communication quality suffers significantly. Prioritization prevents such issues by assigning higher importance to time-sensitive traffic.

Different organizations define “important traffic” based on their operational needs. In a financial institution, transaction processing systems may be prioritized. In a customer service environment, communication platforms may take precedence. This flexibility allows bandwidth management systems to adapt to different business models while maintaining efficiency.

Traffic prioritization is not about blocking other activities. Instead, it is about creating a hierarchy where essential services are guaranteed performance while less critical tasks use remaining resources. This balanced approach ensures both productivity and fairness across the network.

Quality of Service and Its Role in Bandwidth Management

Quality of Service is a fundamental concept in bandwidth management that defines how network resources are allocated to different types of traffic. It is a structured approach that ensures performance consistency by controlling data flow based on predefined rules.

In a network without Quality of Service, all applications compete equally for bandwidth. This can lead to unpredictable performance, especially during peak usage times. Quality of Service introduces order by categorizing traffic and assigning specific handling rules to each category.

One of the key functions of Quality of Service is to guarantee minimum bandwidth levels for critical applications. This means that even if the network becomes heavily loaded, important services will still receive enough resources to function properly. This is particularly important for applications that require real-time communication or continuous data streaming.

Quality of Service also helps reduce latency by prioritizing time-sensitive packets. When data is processed in order of importance, delays are minimized, resulting in smoother and more responsive network performance. This is essential in environments where even small delays can impact user experience or business operations.

Another important aspect is fairness. Quality of Service ensures that no single application can dominate the entire network. By distributing bandwidth intelligently, it maintains balance between different users and services, preventing performance degradation.

Class-Based Traffic Management Systems

Class-based traffic management is a structured method used within bandwidth management systems to organize network traffic into different categories or classes. Each class represents a specific type of data or application, and each is treated according to its importance.

In this system, traffic is first identified and then assigned to a class based on predefined rules. These rules may consider factors such as application type, source or destination address, or protocol used. Once classified, each group is allocated a specific portion of bandwidth.
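The classification step can be sketched as a small rule table where the first matching rule wins. Everything specific here is an assumption made for illustration: the `Packet` fields, the class names, the port ranges, and the `10.1.` address prefix are hypothetical and do not come from any particular product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    protocol: str  # "tcp" or "udp"
    dst_port: int
    src_ip: str


# Hypothetical rule table: (class name, predicate). The first match wins.
RULES = [
    ("voice", lambda p: p.protocol == "udp" and 16384 <= p.dst_port <= 32767),
    ("business", lambda p: p.dst_port == 443 and p.src_ip.startswith("10.1.")),
    ("bulk", lambda p: p.dst_port in (20, 21, 873)),
]


def classify(packet: Packet) -> str:
    """Return the name of the first class whose rule matches, else 'default'."""
    for name, matches in RULES:
        if matches(packet):
            return name
    return "default"


print(classify(Packet("udp", 20000, "10.2.0.5")))  # voice
print(classify(Packet("tcp", 8080, "10.2.0.5")))   # default
```

Keeping the rules in a plain list makes it easy to add or reorder classes as priorities change.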

High-priority classes are typically assigned more resources to ensure smooth performance. These may include business-critical applications, communication tools, or real-time services. Lower-priority classes, such as background updates or non-essential downloads, are assigned remaining resources.

This method allows network administrators to maintain control over how bandwidth is distributed. Instead of treating all traffic equally, class-based management ensures that resources are used in a way that aligns with organizational priorities.

It also provides flexibility. As business needs change, classes can be adjusted without redesigning the entire network. This adaptability makes class-based systems an important component of modern bandwidth management strategies.

Importance of Network Monitoring and Analysis

Effective bandwidth management depends heavily on continuous monitoring and analysis of network activity. Without visibility into how data is being used, it becomes difficult to make informed decisions about resource allocation.

Network monitoring tools provide real-time insights into traffic patterns, bandwidth usage, and application performance. These tools help identify which services consume the most resources and whether any unusual activity is affecting performance.

Analysis of this data allows administrators to detect inefficiencies and optimize network behavior. For example, if a particular application is consuming excessive bandwidth without providing business value, its usage can be restricted or adjusted.

Monitoring also plays a key role in troubleshooting. When network issues arise, detailed traffic data helps identify the root cause quickly. This reduces downtime and improves overall reliability.

In addition, long-term analysis of network usage helps in capacity planning. By understanding how bandwidth requirements evolve over time, organizations can make informed decisions about infrastructure upgrades and resource expansion.

Understanding Bandwidth Monitoring Tools

Bandwidth monitoring tools are essential components of modern network management systems. They provide detailed visibility into how data flows across a network and help administrators make data-driven decisions.

These tools capture packets, collect flow records, or poll device counters as data moves through the network. By examining this information, they can determine which applications are using the most bandwidth and how traffic is distributed across different users and services.

Some tools focus on real-time monitoring, while others provide historical data analysis. Real-time monitoring is useful for identifying immediate issues such as sudden spikes in traffic. Historical analysis helps in understanding long-term trends and usage patterns.

Advanced monitoring systems can also generate alerts when unusual activity is detected. For example, if bandwidth usage suddenly increases beyond normal levels, administrators can be notified immediately. This allows for quick response and prevents potential disruptions.
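One simple way such an alert could work is to compare each new sample against a moving baseline. The sketch below is a minimal illustration under stated assumptions: the function name `find_spikes`, the five-sample window, and the "twice the recent average" threshold are all hypothetical choices, not settings from any monitoring product.

```python
from statistics import mean


def find_spikes(samples_mbps, window=5, factor=2.0):
    """Return indexes whose usage exceeds `factor` times the mean of the previous `window` samples."""
    spikes = []
    for i in range(window, len(samples_mbps)):
        baseline = mean(samples_mbps[i - window:i])
        if samples_mbps[i] > factor * baseline:
            spikes.append(i)
    return spikes


# Hypothetical per-minute usage readings in Mbps.
usage = [40, 42, 38, 41, 39, 40, 95, 41, 40]
print(find_spikes(usage))  # [6]
```

A relative threshold like this adapts to links of different sizes, although production tools typically use more robust baselines than a short moving average.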

These tools are not only useful for troubleshooting but also for optimization. By understanding how bandwidth is being used, organizations can make better decisions about resource allocation and network design.

Bandwidth Allocation Strategies in Practice

Bandwidth allocation is the process of distributing available network capacity among users, applications, or services. It is a critical part of bandwidth management because it directly affects performance and fairness.

In practice, allocation is based on priority levels and usage requirements. Critical applications are assigned a guaranteed portion of bandwidth to ensure consistent performance. Less important services use remaining capacity.

This approach ensures that essential operations are never disrupted, even during periods of high demand. It also prevents inefficient usage where some applications consume more bandwidth than necessary.

Dynamic allocation is also used in many modern systems. In this method, bandwidth is adjusted in real time based on current network conditions. If one application is not using its full allocation, the unused capacity can be temporarily assigned to other services.

This flexible approach improves overall efficiency and ensures that resources are always utilized effectively. It also helps in maintaining balance during unpredictable traffic conditions.
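The combination of guaranteed shares and reuse of idle capacity can be sketched as follows. This is an illustrative model rather than a description of any specific system: the function `allocate`, the class names, and the numbers are assumptions, and the sketch assumes the guarantees add up to no more than the link capacity. Leftover capacity is split equally among classes that still want more.

```python
def allocate(capacity, guarantees, demands):
    """Give each class min(demand, guarantee), then split unused capacity
    equally among the classes whose demand is not yet met."""
    alloc = {c: min(demands[c], guarantees[c]) for c in guarantees}
    leftover = capacity - sum(alloc.values())
    hungry = {c for c in guarantees if demands[c] > alloc[c]}
    while leftover > 1e-9 and hungry:
        share = leftover / len(hungry)
        for c in list(hungry):
            grant = min(share, demands[c] - alloc[c])
            alloc[c] += grant
            leftover -= grant
            if demands[c] - alloc[c] <= 1e-9:
                hungry.discard(c)  # this class is now fully served
    return alloc


# Hypothetical 100 Mbps link: voice is mostly idle, so its spare share is reused.
result = allocate(
    capacity=100,
    guarantees={"voice": 30, "video": 40, "backup": 30},
    demands={"voice": 10, "video": 60, "backup": 80},
)
print(result)  # {'voice': 10, 'video': 50.0, 'backup': 40.0}
```

When voice traffic picks up again, rerunning the allocation with the new demands returns its guaranteed share, which is the behavior the guarantee is meant to provide.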

Traffic Shaping as a Control Mechanism

Traffic shaping is a technique used to control the flow of data in a network by regulating how and when packets are transmitted. Instead of allowing data to flow freely, traffic shaping introduces structure and timing to ensure smooth performance.

The main goal of traffic shaping is to prevent sudden bursts of data that can overwhelm the network. By controlling the rate at which data is sent, it reduces congestion and improves stability.

This technique works by buffering or delaying packets when necessary. Data is released into the network at a controlled rate, ensuring that traffic remains within acceptable limits.
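A token bucket is one common way to implement this kind of controlled release; the text above does not name an algorithm, so treat the choice as an assumption. The sketch below computes when each packet would leave a shaper. The function name `shape` and its parameters are hypothetical, and it assumes no single packet is larger than the burst size.

```python
def shape(packets, rate_bps, burst_bytes):
    """packets: list of (arrival_time_s, size_bytes), sorted by arrival time.
    Returns the release time of each packet under a token-bucket shaper."""
    rate_bytes = rate_bps / 8   # tokens (bytes) added per second
    tokens = burst_bytes        # the bucket starts full
    clock = 0.0                 # time at which `tokens` was last updated
    releases = []
    for arrival, size in packets:
        now = max(arrival, clock)  # FIFO: a packet cannot overtake earlier ones
        tokens = min(burst_bytes, tokens + (now - clock) * rate_bytes)
        if tokens < size:
            now += (size - tokens) / rate_bytes  # delay until enough tokens exist
            tokens = size
        tokens -= size
        clock = now
        releases.append(now)
    return releases


# Three 1000-byte packets arrive at once on a link shaped to 8000 bps (1000 bytes/s).
print(shape([(0.0, 1000), (0.0, 1000), (0.0, 1000)], rate_bps=8000, burst_bytes=1500))
# [0.0, 0.5, 1.5]
```

The first packet leaves immediately because the bucket starts full; the rest are delayed, not dropped, which is what distinguishes shaping from simply discarding excess traffic.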

Traffic shaping is particularly useful in environments where multiple applications generate varying levels of traffic. It ensures that no application can overload the system by sending excessive data at once.

By smoothing out traffic flow, it improves overall network performance and reduces the likelihood of bottlenecks.

Bandwidth Throttling and Controlled Limitation

Bandwidth throttling is a method used to intentionally limit the amount of bandwidth available to certain applications, users, or devices. Unlike prioritization, which focuses on giving preference, throttling focuses on restriction.

This technique is used when certain activities consume more resources than necessary or negatively impact overall performance. By limiting their bandwidth usage, the network can maintain balance and stability.

Throttling can be applied temporarily or permanently depending on the situation. It may be used during peak usage times or as a long-term policy for non-essential services.

One of the key benefits of throttling is fairness. It ensures that no single user or application can dominate network resources. This helps maintain consistent performance for everyone connected to the network.

Throttling also supports better resource planning by preventing unpredictable spikes in usage. It creates a more controlled and manageable network environment.

Balancing Control and Flexibility in Network Systems

Effective bandwidth management requires a balance between strict control and operational flexibility. While control mechanisms are necessary to maintain stability, too much restriction can reduce efficiency and user satisfaction.

Modern systems aim to achieve this balance by using adaptive techniques. These systems adjust bandwidth allocation dynamically based on real-time conditions. This ensures that resources are used efficiently without unnecessary limitations.

Flexibility is important because network demands are constantly changing. Users may require more bandwidth at certain times, while at other times demand may be low. Adaptive management allows the system to respond to these changes effectively.

At the same time, control mechanisms ensure that critical operations are never compromised. This combination of control and flexibility forms the foundation of efficient network performance management.

Advanced Bandwidth Optimization Techniques

Bandwidth optimization techniques focus on improving how efficiently a network uses its available capacity without necessarily increasing the total bandwidth. In many real-world environments, simply upgrading bandwidth is not always practical or cost-effective. Instead, optimization ensures that existing resources are used in the most efficient way possible.

One of the most important optimization methods is data compression. Compression reduces the size of data before it is transmitted across the network. Because fewer bytes cross the link, transfers finish sooner and congestion is reduced. This is especially useful in environments where large, compressible files are frequently transferred; data that is already compressed or encrypted gains little.
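The effect of compression on the number of bytes sent can be checked with Python's standard `zlib` module. The payload below is a made-up, highly repetitive log sample chosen to compress well, and `compression_ratio` is an illustrative helper name, so the ratio shown by this sketch should not be read as typical.

```python
import zlib


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size (lower means more bandwidth saved)."""
    return len(zlib.compress(data, 6)) / len(data)


# Hypothetical repetitive log data.
payload = b"timestamp=2024-01-01 level=INFO msg=heartbeat ok\n" * 200
compressed = zlib.compress(payload, 6)

print(len(payload), "bytes before,", len(compressed), "bytes after")
print(f"ratio: {compression_ratio(payload):.3f}")
```

The receiver restores the original bytes with `zlib.decompress`, so the saving costs some CPU time on both ends in exchange for fewer bytes on the wire.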

Another technique involves caching. Caching stores frequently accessed data closer to the user or within the local network. When the same data is requested again, it is delivered from the cache instead of being downloaded repeatedly from external sources. This significantly reduces bandwidth consumption and improves response time.
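A small least-recently-used cache shows how repeated requests stop consuming upstream bandwidth. This is a sketch under stated assumptions: the `LruCache` class, the `fetch` callback standing in for a real download, and the 1000-byte responses are all hypothetical.

```python
from collections import OrderedDict


class LruCache:
    def __init__(self, max_items, fetch):
        self.max_items = max_items
        self.fetch = fetch          # function that downloads from the origin
        self.items = OrderedDict()
        self.upstream_bytes = 0     # bytes actually pulled over the external link

    def get(self, url):
        if url in self.items:
            self.items.move_to_end(url)     # mark as most recently used
            return self.items[url]
        body = self.fetch(url)
        self.upstream_bytes += len(body)
        self.items[url] = body
        if len(self.items) > self.max_items:
            self.items.popitem(last=False)  # evict the least recently used entry
        return body


# Stand-in for a real download: every object is 1000 bytes.
cache = LruCache(max_items=2, fetch=lambda url: b"x" * 1000)
for url in ["a", "b", "a", "a", "c", "a"]:
    cache.get(url)
print(cache.upstream_bytes)  # 3000
```

Six requests would have cost 6000 bytes without the cache; here only the three misses reach the external link.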

Protocol optimization is also an important approach. Different communication protocols handle data in different ways, and some are more efficient than others. By selecting optimized protocols or fine-tuning existing ones, organizations can reduce unnecessary overhead and improve overall performance.

Load balancing is another critical technique. It distributes network traffic evenly across multiple servers or pathways. Instead of allowing a single route or server to become overloaded, load balancing ensures that traffic is shared efficiently, preventing bottlenecks and maintaining stability.
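One simple balancing policy is to send each new flow to whichever path currently carries the least traffic. The sketch below illustrates that idea only; the function `assign_least_loaded`, the link names, and the flow sizes are assumptions, and real load balancers use a range of other policies as well.

```python
def assign_least_loaded(flows_mbps, links):
    """Place each flow on the link with the lowest current load.
    Ties go to the link listed first."""
    load = {link: 0.0 for link in links}
    placement = []
    for flow in flows_mbps:
        target = min(load, key=load.get)  # link currently carrying the least traffic
        load[target] += flow
        placement.append(target)
    return placement, load


# Four hypothetical flows spread over two WAN links.
placement, load = assign_least_loaded([50, 20, 30, 10], ["wan-a", "wan-b"])
print(placement)  # ['wan-a', 'wan-b', 'wan-b', 'wan-a']
print(load)       # {'wan-a': 60.0, 'wan-b': 50.0}
```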

Together, these techniques create a more efficient network environment where bandwidth is used intelligently rather than wasted.

Deep Dive into Quality of Service Mechanisms

Quality of Service is not a single function but a combination of mechanisms that work together to manage network performance. These mechanisms include classification, prioritization, scheduling, and queuing.

Classification is the first step, where network traffic is identified and grouped based on predefined rules. This allows the system to understand what type of data is being transmitted and how important it is. Without classification, all traffic would be treated the same, making prioritization impossible.

Once classified, traffic is prioritized. This ensures that important data is processed before less important data. For example, real-time communication traffic is typically given higher priority than background system updates.

Scheduling determines the order in which packets are transmitted. Even if multiple types of traffic are present, scheduling ensures that high-priority packets are sent first. This reduces delays and improves responsiveness for critical applications.

Queuing is used to manage data that cannot be sent immediately. When the network is busy, packets are placed in queues based on their priority level. High-priority queues are served first, while lower-priority queues wait longer and, if a queue fills up, may have packets dropped.
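Strict priority queuing, the simplest form of this idea, can be sketched with a heap. The class name `PriorityScheduler`, the numeric priority levels (0 is most urgent), and the packet labels are assumptions made for illustration.

```python
import heapq
from itertools import count


class PriorityScheduler:
    """Strict priority: always send the most urgent waiting packet first."""

    def __init__(self):
        self._heap = []
        self._order = count()  # tie-breaker keeps FIFO order within a priority

    def enqueue(self, priority, packet):
        heapq.heappush(self._heap, (priority, next(self._order), packet))

    def dequeue(self):
        return heapq.heappop(self._heap)[2]

    def __len__(self):
        return len(self._heap)


sched = PriorityScheduler()
sched.enqueue(2, "backup-1")
sched.enqueue(0, "voice-1")
sched.enqueue(1, "web-1")
sched.enqueue(0, "voice-2")

sent = [sched.dequeue() for _ in range(len(sched))]
print(sent)  # ['voice-1', 'voice-2', 'web-1', 'backup-1']
```

Strict priority can starve low-priority traffic when urgent traffic never stops arriving, which is why weighted schedulers that give every queue some share are often used instead.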

These mechanisms work together to create a controlled and predictable network environment. Instead of random data flow, traffic is organized in a structured manner that aligns with business needs and performance goals.

Enterprise-Level Bandwidth Management Approaches

In large organizations, bandwidth management becomes significantly more complex due to the scale of operations and the diversity of applications being used. Enterprise-level approaches focus on centralized control, automation, and scalability.

Centralized management systems allow administrators to control network policies from a single point. This makes it easier to implement consistent rules across multiple departments, offices, or locations. Instead of managing each segment separately, everything is governed under a unified framework.

Automation plays a major role in enterprise environments. Automated systems can continuously monitor network conditions and adjust bandwidth allocation without manual intervention. This ensures real-time responsiveness to changing demands and reduces the risk of human error.

Scalability is another important factor. As organizations grow, their network requirements also increase. Enterprise-level bandwidth management systems are designed to scale with demand, allowing additional users, devices, and applications to be integrated without disrupting existing operations.

Policy enforcement is also critical in enterprise systems. Clear rules are established regarding how bandwidth should be used, and these rules are enforced automatically. This ensures compliance and prevents misuse of network resources.

Role of Network Infrastructure in Performance Control

Network infrastructure forms the foundation of bandwidth management. The quality and configuration of hardware components directly influence how effectively bandwidth is utilized.

Routers play a key role in directing traffic between different networks. High-performance routers can process large volumes of data more efficiently, reducing delays and improving throughput. Poorly configured routers, however, can become bottlenecks that slow down the entire system.

Switches are responsible for managing traffic within local networks. They ensure that data is delivered to the correct destination efficiently. Advanced switches support features such as traffic segmentation and prioritization, which are essential for effective bandwidth control.

Cabling and physical connections also impact performance. High-quality cables reduce signal loss and support higher data transfer rates. Outdated or damaged cables can significantly limit network capacity, even if other components are optimized.

Network topology, or the way devices are connected, also influences performance. A well-designed topology reduces unnecessary data paths and improves efficiency. Poor design can lead to congestion and uneven distribution of traffic.

All these components must work together to create a stable and efficient network environment where bandwidth management strategies can be effectively implemented.

Understanding Latency and Its Relationship with Bandwidth

Latency refers to the delay between sending and receiving data across a network. While bandwidth determines how much data can be transmitted, latency determines how quickly that data reaches its destination.

High bandwidth does not always guarantee low latency. A network may be capable of transmitting large amounts of data, but if delays are present, performance will still be affected. This is why both factors must be managed together.
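A simplified model makes the interaction concrete: the time to fetch something is roughly the round-trip delay plus the time needed to push its bits onto the link. The function `fetch_seconds` and the two example links are hypothetical, and the model ignores congestion and protocol details.

```python
def fetch_seconds(size_bytes, bandwidth_bps, rtt_seconds, round_trips=1):
    """Rough model: time spent waiting on round trips plus time to transmit the bits."""
    return round_trips * rtt_seconds + (size_bytes * 8) / bandwidth_bps


# A 10 KB response on two hypothetical links.
fat_but_far = fetch_seconds(10_000, 1_000_000_000, rtt_seconds=0.100)   # 1 Gbps, 100 ms RTT
thin_but_near = fetch_seconds(10_000, 100_000_000, rtt_seconds=0.010)   # 100 Mbps, 10 ms RTT
print(f"{fat_but_far:.5f} s vs {thin_but_near:.5f} s")
```

For a small object, the link with ten times less bandwidth finishes in roughly a tenth of the time, because latency dominates; for very large transfers the balance shifts back toward bandwidth.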

Latency is influenced by several factors, including distance between devices, network congestion, and processing delays within network hardware. Longer distances naturally increase latency because data takes more time to travel.

Congestion also plays a major role. When too much traffic is present, packets may need to wait in queues before being transmitted. This waiting time increases latency and affects real-time applications.

Bandwidth management helps reduce latency by controlling traffic flow and prioritizing important data. By ensuring that critical packets are transmitted first, delays are minimized and responsiveness is improved.

Impact of Application Behavior on Network Load

Different applications use bandwidth in different ways, and understanding their behavior is essential for effective management. Some applications generate constant traffic, while others create intermittent bursts of data.

Streaming applications, for example, require continuous data flow to maintain smooth playback. These applications are sensitive to interruptions and require stable bandwidth allocation.

File transfer applications, on the other hand, often generate large bursts of traffic over short periods. Without control, they can temporarily consume a large portion of available bandwidth, affecting other services.

Cloud-based applications also contribute significantly to network load. These applications constantly synchronize data between local devices and remote servers, creating ongoing background traffic.

Uncontrolled application behavior can lead to unpredictable network performance. Bandwidth management ensures that such applications are regulated so that they do not negatively impact overall system stability.

Adaptive Bandwidth Allocation in Dynamic Networks

Modern networks often use adaptive bandwidth allocation to respond to changing conditions in real time. Instead of fixed distribution, bandwidth is dynamically adjusted based on current demand.

When demand increases, critical applications are given additional resources to maintain performance. When demand decreases, unused bandwidth is redistributed to other services or reserved for future use.

This adaptive approach improves efficiency by ensuring that resources are never wasted. It also enhances user experience by maintaining consistent performance even during fluctuating traffic conditions.

Automated systems, including those based on machine learning, are increasingly used to support adaptive allocation. These systems analyze traffic patterns and automatically adjust settings based on historical and real-time data.

This creates a self-adjusting network environment that requires minimal manual intervention while maintaining optimal performance levels across all conditions.

Role of Security in Bandwidth Control Systems

Security is closely connected to bandwidth management because unauthorized or malicious activity can consume large amounts of network resources. Protecting the network ensures that bandwidth is used only for legitimate purposes.

One important aspect is traffic encryption. Encryption protects sensitive information during transmission, but it adds a small amount of bandwidth overhead from handshakes and per-packet headers, and it prevents intermediate devices from inspecting or compressing the payload.

Access control mechanisms ensure that only authorized users and devices can access network resources. By limiting access, unnecessary traffic is reduced and bandwidth is preserved for legitimate use.

Network segmentation divides the network into smaller controlled sections. This helps isolate traffic and prevent congestion from spreading across the entire system.

Security monitoring tools detect unusual activity such as sudden spikes in traffic, which may indicate attacks or misuse. Early detection helps prevent bandwidth exhaustion and maintains system stability.

Importance of Real-Time Decision Making in Networks

Real-time decision making is essential in modern bandwidth management systems because network conditions change rapidly. Static configurations are not sufficient to handle dynamic environments.

Real-time systems continuously analyze traffic and make adjustments instantly. This allows the network to respond immediately to congestion, spikes in usage, or unexpected demand.

Decision-making systems rely on predefined rules combined with live data analysis. This ensures that responses are both fast and accurate, maintaining stability without manual intervention.
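The combination of predefined rules and live data can be sketched as a small rule table evaluated against the latest measurements. The metric names, thresholds, and action labels below are hypothetical; an actual system would map the resulting actions onto its own configuration interface.

```python
# Each rule pairs a condition on live metrics with the action to take.
# Metric names and thresholds are illustrative assumptions.
RULES = [
    (lambda m: m["link_utilization"] > 0.9, "throttle_bulk_transfers"),
    (lambda m: m["voice_latency_ms"] > 150, "raise_voice_priority"),
]


def decide(metrics):
    """Return the actions whose conditions hold for the current metrics."""
    return [action for condition, action in RULES if condition(metrics)]


# Hypothetical usage with one snapshot of live measurements.
actions = decide({"link_utilization": 0.95, "voice_latency_ms": 40})
```

Keeping the rules as data rather than hard-coded branches makes it easier to review and change policy without touching the evaluation logic.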

Such systems improve reliability by preventing small issues from escalating into major disruptions. They also ensure that critical services remain operational even under heavy load conditions.

Bandwidth Management in Cloud-Based Environments

Cloud computing has significantly changed how bandwidth is managed because data is no longer confined to local systems. Instead, applications, storage, and services operate across distributed cloud infrastructures. This shift has made bandwidth management more complex and more important than ever.

In cloud environments, data constantly moves between users and remote servers. This continuous exchange requires careful coordination to avoid congestion and ensure smooth performance. Without proper management, cloud applications can experience delays, slow synchronization, and reduced responsiveness.

One of the key aspects of cloud-based bandwidth management is scalability. Cloud systems must handle varying levels of demand, often increasing or decreasing within short periods. Bandwidth management ensures that resources automatically adjust based on real-time usage requirements.

Another important factor is multi-tenancy. In cloud systems, multiple users or organizations often share the same infrastructure. Bandwidth must be distributed fairly so that one user does not negatively affect the performance of others. This requires strict control policies and intelligent allocation systems.
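One common notion of fair distribution is max-min fairness: tenants with small demands are fully served, and the leftover capacity is split evenly among the rest. The sketch below is one possible implementation under that assumption; the tenant names and figures are made up, and cloud providers may use different or weighted schemes.

```python
def max_min_fair(capacity, demands):
    """Split capacity among tenants by max-min fairness.

    demands maps tenant name to requested bandwidth (same unit as capacity).
    """
    allocation = {tenant: 0.0 for tenant in demands}
    unsatisfied = dict(demands)
    remaining = float(capacity)
    while unsatisfied and remaining > 1e-9:
        share = remaining / len(unsatisfied)
        # Tenants whose demand fits within an equal share are served in full.
        satisfied = {t: d for t, d in unsatisfied.items() if d <= share}
        if not satisfied:
            # Everyone left wants more than the equal share: split evenly.
            for tenant in unsatisfied:
                allocation[tenant] += share
            break
        for tenant, demand in satisfied.items():
            allocation[tenant] += demand
            remaining -= demand
            del unsatisfied[tenant]
    return allocation


# Hypothetical usage: a 100 Mbps link shared by three tenants.
shares = max_min_fair(100, {"a": 20, "b": 50, "c": 80})
```

In this example tenant "a" receives its full 20, and "b" and "c" split the remaining 80 evenly, so no tenant can take more than another unless the other has already been fully served.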

Cloud platforms also rely heavily on data redundancy and replication. While these improve reliability, they also increase bandwidth consumption. Efficient management ensures that replication processes do not interfere with active user traffic or critical operations.

Overall, cloud environments demand highly dynamic bandwidth management strategies that can adapt quickly to changing workloads and keep performance consistent across distributed systems.

Role of Virtualization in Bandwidth Control

Virtualization introduces another layer of complexity in network management by allowing multiple virtual machines and applications to run on a single physical infrastructure. Each virtual environment competes for shared bandwidth resources, making control essential.

In virtualized systems, bandwidth is often allocated to virtual machines based on priority and workload requirements. This ensures that critical virtual services receive sufficient resources while less important ones operate within controlled limits.
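Keeping a less important virtual machine "within controlled limits" is often done with a rate limiter. The sketch below shows a token bucket, a widely used technique for this purpose; the class name, the rate and burst figures, and the explicit `now` timestamp argument are assumptions made to keep the example small and deterministic.

```python
class TokenBucket:
    """Allow traffic at a sustained rate with a bounded burst."""

    def __init__(self, rate_bytes_per_s, burst_bytes):
        self.rate = rate_bytes_per_s
        self.capacity = burst_bytes
        self.tokens = burst_bytes  # start with a full bucket
        self.last = 0.0

    def allow(self, packet_bytes, now):
        """Return True if the packet may be sent at time `now` (in seconds)."""
        elapsed = now - self.last
        # Refill in proportion to elapsed time, never beyond the burst size.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if packet_bytes <= self.tokens:
            self.tokens -= packet_bytes
            return True
        return False


# Hypothetical usage: limit a low-priority VM to 1000 bytes/s with a 1500-byte burst.
limiter = TokenBucket(rate_bytes_per_s=1000, burst_bytes=1500)
first_packet_sent = limiter.allow(1500, now=0.0)
```

A higher-priority virtual machine would simply be given a larger rate, a larger burst, or no limiter at all.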

Virtual switches play an important role in managing traffic between virtual machines. They handle data flow internally within the host system and ensure that communication remains efficient without overloading the physical network.

Resource isolation is another key benefit of virtualization. It allows administrators to separate workloads so that one virtual machine cannot interfere with another. This improves stability and ensures predictable performance.

Dynamic resource allocation is commonly used in virtual environments. Bandwidth can be increased or decreased for specific virtual machines depending on demand, allowing efficient utilization of physical infrastructure.

Virtualization has made bandwidth management more flexible but also more complex, requiring advanced monitoring and control mechanisms.

Impact of Remote Work on Bandwidth Demand

The rise of remote work has significantly increased dependence on stable and efficient bandwidth management systems. Employees working from different locations rely heavily on internet-based tools for communication, collaboration, and file sharing.

Video conferencing has become one of the most bandwidth-intensive activities in remote work environments. It requires continuous, high-quality data transmission to maintain clear audio and video communication. Without proper prioritization, call quality can degrade quickly.

Cloud-based collaboration tools also contribute to increased bandwidth usage. These tools constantly synchronize files, updates, and shared documents, creating continuous background traffic.

Remote access to corporate systems adds another layer of demand. Employees accessing internal systems from external networks require secure and stable connections, which increases overall bandwidth consumption.

Bandwidth management ensures that remote workers receive consistent performance regardless of their location. By prioritizing essential communication tools and optimizing traffic flow, organizations can maintain productivity across distributed teams.
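Prioritizing essential communication tools can be pictured as a strict priority queue: packets from higher-priority classes always leave first, and packets within a class keep their arrival order. The traffic class names and their ranking below are illustrative assumptions, and strict priority is only one of several possible scheduling policies.

```python
import heapq
from itertools import count

# Lower number means higher priority. The classes are illustrative.
PRIORITY = {"voice": 0, "video": 1, "sync": 2, "bulk": 3}


class PriorityScheduler:
    """Strict-priority scheduler that preserves arrival order within a class."""

    def __init__(self):
        self._heap = []
        self._order = count()  # tie-breaker so equal priorities stay first-in, first-out

    def enqueue(self, traffic_class, packet):
        heapq.heappush(self._heap, (PRIORITY[traffic_class], next(self._order), packet))

    def dequeue(self):
        """Return the next packet to send, or None if the queue is empty."""
        return heapq.heappop(self._heap)[2] if self._heap else None


# Hypothetical usage: a bulk download arrives before a voice packet.
scheduler = PriorityScheduler()
scheduler.enqueue("bulk", "update-chunk-1")
scheduler.enqueue("voice", "call-frame-1")
next_packet = scheduler.dequeue()  # the voice frame is sent first
```

Strict priority can starve the lowest classes under sustained load, so it is often paired with a rate limit or a weighted scheme for the high-priority traffic.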

This shift has made bandwidth management a critical part of modern workplace infrastructure rather than just an IT support function.

Artificial Intelligence in Bandwidth Optimization

Artificial intelligence is increasingly being used to enhance bandwidth management by enabling systems to learn from network behavior and make intelligent decisions automatically.

AI-based systems analyze large volumes of network data to identify patterns in traffic usage. Based on these patterns, they can predict future demand and adjust bandwidth allocation in advance.

One of the key advantages of AI is its ability to detect anomalies. If unusual traffic behavior is detected, such as sudden spikes or unexpected usage patterns, the system can respond immediately to prevent congestion or potential security threats.

AI also improves efficiency by continuously optimizing resource distribution. Instead of relying on fixed rules, AI systems adapt dynamically based on real-time conditions.

Predictive analysis is another important feature. By studying historical data, AI can forecast peak usage times and prepare the network accordingly. This helps prevent performance issues before they occur.
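A very simple stand-in for predictive analysis is to average historical usage by hour of day and treat the busiest hours as the expected peaks. The sketch below does only that; the function names and sample data are hypothetical, and production systems generally use richer models that account for weekdays, seasonality, and trends.

```python
from collections import defaultdict


def hourly_profile(samples):
    """samples: iterable of (hour_of_day, mbps). Returns {hour: average mbps}."""
    totals = defaultdict(lambda: [0.0, 0])
    for hour, mbps in samples:
        totals[hour][0] += mbps
        totals[hour][1] += 1
    return {hour: total / n for hour, (total, n) in totals.items()}


def predicted_peak_hours(samples, top_n=2):
    """Return the hours with the highest average historical usage, busiest first."""
    profile = hourly_profile(samples)
    return sorted(profile, key=profile.get, reverse=True)[:top_n]


# Hypothetical usage with a handful of historical readings.
history = [(9, 80), (9, 100), (13, 60), (15, 120), (15, 110), (22, 10)]
peaks = predicted_peak_hours(history)
```

The predicted peak hours could then be used to schedule backups and large transfers away from those windows, or to raise allocations ahead of time.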

As networks become more complex, AI-driven bandwidth management is becoming essential for maintaining stability and performance at scale.

Emerging Trends in Network Traffic Control

Modern bandwidth management is evolving rapidly due to advancements in technology and changing user demands. Several emerging trends are shaping the future of network traffic control.

One major trend is the shift toward software-defined networking. This approach separates network control from physical hardware, allowing administrators to manage traffic through software-based systems. It provides greater flexibility and easier configuration of bandwidth policies.

Another trend is edge computing. Instead of processing all data in centralized locations, edge computing processes data closer to the source. This lowers latency and lightens the load on central networks, improving overall efficiency.

5G technology is also influencing bandwidth management. With significantly higher speeds and lower latency, 5G enables more efficient handling of large volumes of data. However, it also increases demand, requiring more advanced control mechanisms.

Automation continues to expand as networks become more complex. Automated systems are replacing manual configuration processes, allowing real-time adjustments and reducing operational overhead.

These trends indicate a shift toward more intelligent, decentralized, and adaptive network systems.

Challenges in Large-Scale Bandwidth Management

Managing bandwidth at a large scale introduces several challenges that require careful planning and execution. One of the primary challenges is complexity. As networks grow, the number of devices, applications, and users increases significantly, making it harder to maintain control.

Another challenge is unpredictable traffic patterns. Sudden spikes in usage can occur without warning, especially in environments with global users or real-time applications. This unpredictability makes planning difficult.

Resource limitations also present a challenge. Even with advanced infrastructure, bandwidth is still finite. Organizations must constantly balance demand with available capacity.

Compatibility issues between different systems and technologies can also create inefficiencies. Integrating new tools into existing networks requires careful configuration to avoid disruptions.

Security concerns add another layer of difficulty. Ensuring that bandwidth is not consumed by unauthorized activity requires constant monitoring and enforcement of security policies.

Despite these challenges, modern tools and techniques continue to improve the effectiveness of large-scale bandwidth management systems.

Future of Bandwidth Management Systems

The future of bandwidth management is moving toward greater automation, intelligence, and integration. As networks continue to grow in complexity, traditional manual methods will become less effective.

Future systems will rely heavily on machine learning and artificial intelligence to manage traffic automatically. These systems will not only react to network conditions but also predict them and take proactive actions.

Integration with cloud platforms will become deeper, allowing seamless coordination between local and remote resources. This will improve efficiency and reduce latency across distributed systems.

Another important development will be the increased use of real-time analytics. Networks will continuously analyze performance data and adjust configurations instantly to maintain optimal performance.

Security will also become more tightly integrated with bandwidth management. Future systems will automatically detect and respond to threats while maintaining performance stability.

Overall, the future points toward highly intelligent, self-managing networks that require minimal human intervention.

Final Conclusion

Bandwidth management is a critical component of modern networking that ensures efficient, stable, and fair distribution of data across systems. It involves controlling how network resources are used, prioritizing important traffic, and optimizing performance to meet organizational needs.

Through techniques such as traffic prioritization, quality of service, traffic shaping, and bandwidth allocation, networks can maintain high performance even under heavy demand. Monitoring tools and intelligent systems further enhance visibility and control.

As technology continues to evolve, bandwidth management is becoming more advanced, incorporating artificial intelligence, cloud computing, and automation. These developments are making networks more adaptive, efficient, and reliable.

Ultimately, effective bandwidth management is essential for maintaining smooth communication, supporting business operations, and ensuring a high-quality user experience in an increasingly connected digital world.