Linux offers a diverse range of file systems, and that flexibility is one of the core reasons it powers everything from personal laptops to enterprise-grade servers and high-performance computing systems. A file system defines how data is stored, organized, and retrieved on storage devices, directly influencing performance, reliability, and scalability. Understanding the differences between major file systems is essential for making informed decisions, whether you are managing a simple desktop environment or deploying a large-scale infrastructure.

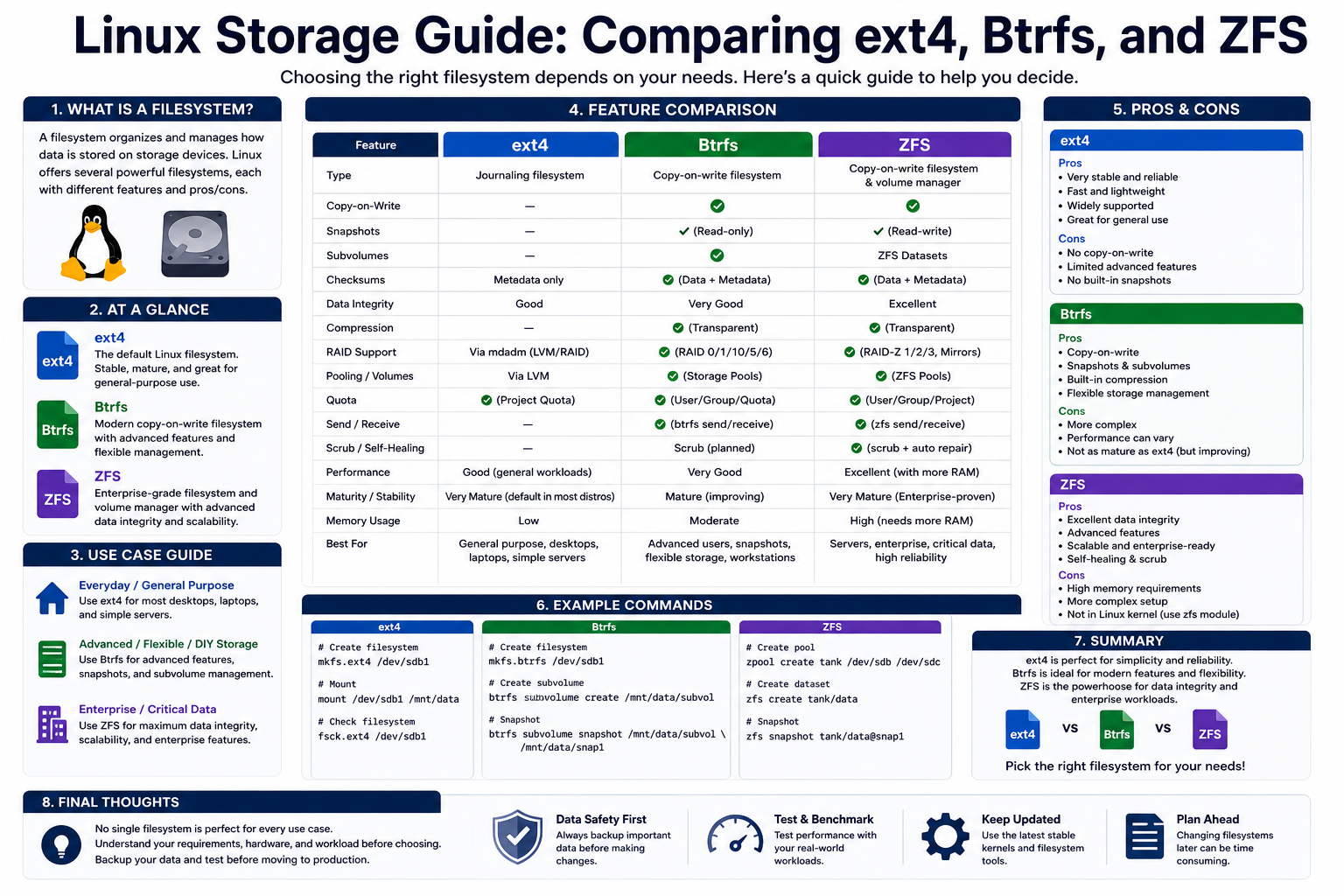

Among the many available options, ext4, Btrfs, ZFS, and XFS stand out as some of the most widely used and discussed. Each one is designed with different priorities in mind. Some focus on stability and simplicity, while others emphasize advanced data protection, scalability, or high-speed performance. Choosing the right file system is not just a technical preference—it shapes how efficiently your system operates and how well your data is protected over time.

File systems are more than just storage mechanisms. They determine how quickly files can be accessed, how resilient your data is against corruption, and how easily your storage can scale as your needs grow. For example, a developer working on a personal Linux machine may prioritize reliability and ease of use, while an enterprise administrator managing petabytes of data may need features like snapshots, redundancy, and self-healing capabilities. This is where understanding the strengths and trade-offs of each file system becomes crucial.

Before diving into comparisons, it helps to grasp some foundational concepts. File systems manage metadata, which includes information such as file names, permissions, timestamps, and storage locations. They also control how data blocks are allocated and how fragmentation is handled. Modern file systems introduce additional layers of intelligence, such as journaling, copy-on-write mechanisms, and data integrity verification, all of which contribute to improved reliability and performance.
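To make the idea of metadata concrete, the short sketch below reads the attributes that a file system keeps about a file, separate from the file's contents. It uses Python's standard `os.stat` call on a temporary file; the dictionary keys are illustrative names chosen for this example, not terms from any particular file system.

```python
import os
import stat
import tempfile
import time

# Create a scratch file so the example does not depend on any existing path.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(b"hello, file system\n")
    path = tmp.name

info = os.stat(path)

# Everything below is metadata: none of it is part of the file's contents.
metadata = {
    "name": os.path.basename(path),
    "size_bytes": info.st_size,
    "permissions": stat.filemode(info.st_mode),
    "modified": time.ctime(info.st_mtime),
    "inode": info.st_ino,
}

for key, value in metadata.items():
    print(f"{key}: {value}")

os.remove(path)
```

The same call works on any mounted file system, because the kernel presents metadata through a common interface regardless of how each file system stores it on disk.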

As Linux has evolved, so have its file systems. Early designs focused primarily on basic storage needs, but modern systems incorporate advanced features to meet the demands of virtualization, cloud computing, and large-scale data processing. This evolution reflects the growing importance of data integrity, scalability, and performance in today’s computing environments.

In the sections that follow, we will explore ext4, Btrfs, ZFS, and XFS in depth. Each file system represents a different approach to solving common storage challenges, and understanding these approaches will help you choose the most suitable option for your specific use case.

Understanding the Role of File Systems in Linux

A file system acts as the bridge between the operating system and the physical storage hardware. Without it, data would exist as an unstructured collection of bits, making it impossible to store or retrieve information in a meaningful way. In Linux, the file system is deeply integrated into the operating system’s architecture, influencing everything from boot processes to application performance.

At its core, a file system organizes data into files and directories, creating a hierarchical structure that users and applications can navigate. This structure allows for efficient data management and retrieval, but the underlying implementation varies significantly between different file systems. These differences can affect how quickly files are accessed, how storage space is utilized, and how resilient the system is to failures.

One of the most important responsibilities of a file system is managing data consistency. When a system crashes or loses power unexpectedly, there is a risk that data being written at that moment could become corrupted. To address this, many modern file systems use journaling or similar techniques. Journaling records changes before they are applied, allowing the system to recover quickly and maintain consistency after a crash.
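The journaling idea can be sketched in a few lines. The toy class below is an in-memory illustration only, with hypothetical names (`JournaledStore`, `crash_before_apply`); real journals live on disk and deal with ordering, batching, and checkpointing that this sketch ignores.

```python
class JournaledStore:
    """Toy key-value store that logs each change before applying it."""

    def __init__(self):
        self.data = {}      # stands in for the on-disk structures
        self.journal = []   # stands in for the on-disk journal

    def write(self, key, value, crash_before_apply=False):
        # Step 1: record the intent in the journal.
        entry = {"key": key, "value": value, "applied": False}
        self.journal.append(entry)
        if crash_before_apply:
            return  # simulate power loss right after the journal write
        # Step 2: apply the change, then mark the entry as done.
        self.data[key] = value
        entry["applied"] = True

    def recover(self):
        # Replay every journal entry that never reached the main structures.
        replayed = 0
        for entry in self.journal:
            if not entry["applied"]:
                self.data[entry["key"]] = entry["value"]
                entry["applied"] = True
                replayed += 1
        return replayed


store = JournaledStore()
store.write("a.txt", "v1")
store.write("b.txt", "v1", crash_before_apply=True)
print(store.data)       # b.txt is missing after the simulated crash
print(store.recover())  # number of entries replayed
print(store.data)       # both files are present again
```

Recovery only has to scan the journal rather than the whole disk, which is why journaled file systems come back quickly after a crash.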

Another critical aspect is scalability. As storage requirements grow, the file system must be able to handle larger volumes and more files without degrading performance. Older file systems often struggled with this, but newer designs incorporate advanced data structures and allocation strategies to maintain efficiency even at massive scales.

Performance is also heavily influenced by the file system. Factors such as how data is written to disk, how fragmentation is handled, and how metadata is managed all play a role. Some file systems are optimized for small files and general-purpose use, while others are designed for large, sequential data transfers or parallel workloads.

Data integrity has become an increasingly important consideration. Modern file systems like Btrfs and ZFS include mechanisms to detect and correct data corruption automatically. These features are especially valuable in environments where data reliability is critical, such as financial systems, scientific research, and cloud storage platforms.

In addition to these technical considerations, ease of use and maintenance are also important. Some file systems require minimal configuration and work reliably out of the box, while others offer advanced features that may require careful tuning and management. The choice often depends on the user’s expertise and the complexity of the environment.

By understanding these fundamental roles, it becomes easier to appreciate why different file systems exist and how they cater to different needs. Each design represents a balance between simplicity, performance, and advanced functionality.

Ext4 as a Reliable and Established Standard

Ext4 is one of the most widely used file systems in the Linux world, and for good reason. It builds upon the legacy of earlier extended file systems, refining and improving their capabilities while maintaining compatibility and stability. For many users, ext4 serves as the default choice because it offers a dependable balance of performance, reliability, and simplicity.

One of the defining characteristics of ext4 is its maturity. It has been tested extensively over many years, making it a trusted option for both desktop and server environments. This long history has allowed developers to identify and fix issues, resulting in a stable and predictable file system that performs well under a wide range of conditions.

Journaling is a key feature that contributes to ext4’s reliability. By recording changes before they are applied, the file system can recover quickly from crashes or power failures. This reduces the risk of data corruption and ensures that the system remains consistent. While journaling primarily protects metadata rather than actual file contents, it still provides a significant level of resilience.

Ext4 also introduces improvements in storage efficiency through the use of extents. Instead of tracking individual data blocks, extents allow the file system to manage contiguous ranges of blocks more effectively. This reduces fragmentation and improves performance, especially when dealing with large files. As a result, the system spends less time searching for data and more time processing it.
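The saving from extents is easy to see in a small sketch. The function below is a simplified illustration, not ext4's on-disk format: it collapses a list of block numbers into `(start, length)` pairs, which is the basic idea behind an extent.

```python
def blocks_to_extents(blocks):
    """Collapse a sorted list of block numbers into (start, length) extents."""
    extents = []
    for block in blocks:
        if extents and block == extents[-1][0] + extents[-1][1]:
            # The block continues the current run, so grow that extent.
            start, length = extents[-1]
            extents[-1] = (start, length + 1)
        else:
            # A gap was found, so start a new extent.
            extents.append((block, 1))
    return extents


# A file stored in two contiguous runs on disk.
file_blocks = list(range(100, 108)) + list(range(500, 504))
extents = blocks_to_extents(file_blocks)
print(len(file_blocks), "block pointers vs", len(extents), "extents")
print(extents)
```

The more contiguous a file is, the fewer extents are needed to describe it, so large sequential files benefit the most.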

Another advantage of ext4 is its support for large files and file systems. With the common 4 KiB block size, it can handle individual files of up to 16 TiB and file systems of up to 1 EiB, which makes it well-suited for modern storage needs. This scalability ensures that ext4 remains relevant even as storage capacities continue to grow.

Delayed allocation is another feature that enhances performance. By postponing the allocation of disk space until data is actually written, ext4 can make more informed decisions about where to place data. This reduces fragmentation and improves overall efficiency, particularly in workloads involving frequent file updates.
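A rough simulation shows why waiting helps. The sketch below assumes a deliberately naive allocator that hands out blocks in sequence (the `Disk` class and both strategy functions are hypothetical), and compares allocating on every write with allocating once per file at flush time.

```python
class Disk:
    """Hands out block numbers sequentially, like a very naive allocator."""

    def __init__(self):
        self.next_free = 0

    def allocate(self, count):
        start = self.next_free
        self.next_free += count
        return list(range(start, start + count))


def immediate(writes):
    """Allocate blocks for every write as soon as it arrives."""
    disk, layout = Disk(), {}
    for name, nblocks in writes:
        layout.setdefault(name, []).extend(disk.allocate(nblocks))
    return layout


def delayed(writes):
    """Buffer writes in memory, then allocate once per file at flush time."""
    disk, pending = Disk(), {}
    for name, nblocks in writes:
        pending[name] = pending.get(name, 0) + nblocks
    return {name: disk.allocate(total) for name, total in pending.items()}


# Two files written in interleaved one-block chunks.
writes = [("a", 1), ("b", 1), ("a", 1), ("b", 1), ("a", 1), ("b", 1)]
print(immediate(writes))  # blocks of the two files alternate on disk
print(delayed(writes))    # each file gets one contiguous run
```

Because the delayed strategy knows the total size of each file before choosing a location, each file ends up in a single contiguous run instead of being interleaved with its neighbor.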

Despite its strengths, ext4 does have limitations. It lacks some of the advanced features found in newer file systems, such as built-in snapshots and comprehensive data integrity checks. For many users, however, these features are not essential, and the simplicity of ext4 becomes an advantage rather than a drawback.

In practice, ext4 is often chosen for general-purpose use. It performs reliably in everyday computing scenarios, from personal desktops to web servers. Its straightforward design and minimal maintenance requirements make it an attractive option for users who prioritize stability and ease of use over cutting-edge features.

Btrfs as a Modern and Feature-Rich Alternative

Btrfs represents a new generation of Linux file systems designed to address the limitations of traditional approaches. It introduces advanced features that focus on data integrity, flexibility, and efficient storage management. For users seeking modern capabilities, Btrfs offers a compelling alternative to more established file systems like ext4.

One of the most notable features of Btrfs is its use of copy-on-write technology. Instead of modifying data in place, the file system writes changes to new locations, preserving the original data until the operation is complete. This approach enables the creation of snapshots, which capture the state of the file system at a specific point in time. Snapshots are extremely useful for backups, system recovery, and testing changes without risking data loss.
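The relationship between copy-on-write and snapshots can be sketched with a toy volume. The class below is an assumption-laden illustration (one block per file, everything in memory, hypothetical names throughout) rather than a model of the real Btrfs tree structures.

```python
class CowVolume:
    """Toy copy-on-write volume: blocks are never modified in place."""

    def __init__(self):
        self.blocks = {}      # block id -> bytes (never changed once written)
        self.live = {}        # file name -> block id for the live tree
        self.snapshots = {}   # snapshot name -> frozen copy of a name map
        self._next_id = 0

    def write(self, name, data):
        # New data always goes to a fresh block; the old block stays intact.
        block_id = self._next_id
        self._next_id += 1
        self.blocks[block_id] = data
        self.live[name] = block_id

    def snapshot(self, snap_name):
        # Only the small name-to-block map is copied, not the data blocks.
        self.snapshots[snap_name] = dict(self.live)

    def read(self, name, snap_name=None):
        tree = self.snapshots[snap_name] if snap_name else self.live
        return self.blocks[tree[name]]


vol = CowVolume()
vol.write("config", b"version=1")
vol.snapshot("before-upgrade")
vol.write("config", b"version=2")
print(vol.read("config"))                    # the live view sees the new data
print(vol.read("config", "before-upgrade"))  # the snapshot still sees the old data
```

Since the old block is never overwritten, taking a snapshot costs almost nothing: it only needs to remember which blocks were current at that moment.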

Data integrity is another area where Btrfs excels. It uses checksums to verify both data and metadata, ensuring that any corruption can be detected. This is particularly important in environments where data reliability is critical. By identifying errors early, Btrfs helps prevent silent data corruption, which can otherwise go unnoticed until it causes significant problems.
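Checksum verification follows a simple pattern: compute a checksum at write time, store it, and recompute it on every read. The sketch below uses `zlib.crc32` from the Python standard library as a stand-in; it is not the exact algorithm or layout that Btrfs uses, and the helper names are hypothetical.

```python
import zlib


def store_block(data):
    """Return the block together with a checksum computed at write time."""
    return {"data": bytearray(data), "checksum": zlib.crc32(data)}


def verify_block(block):
    """Recompute the checksum on read and compare it with the stored one."""
    return zlib.crc32(bytes(block["data"])) == block["checksum"]


block = store_block(b"important payload")
print(verify_block(block))   # intact block passes verification

block["data"][0] ^= 0x01     # flip a single bit to simulate silent corruption
print(verify_block(block))   # the mismatch is detected on read
```

Detection alone does not restore the data. Repair requires a second, intact copy, which is why checksums are usually paired with some form of redundancy.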

Compression is also built into Btrfs, allowing files to be stored more efficiently. By reducing the amount of space required for data, compression not only increases storage capacity but can also improve performance in certain scenarios. Modern processors are capable of handling compression tasks efficiently, making this feature practical for everyday use.
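The space saving depends heavily on the data. As a rough illustration, the snippet below compresses a block of repetitive, log-like text with Python's `zlib` module. This only demonstrates the general effect: Btrfs performs compression inside the kernel, and the sample data here is invented for the example.

```python
import zlib

# Repetitive text, such as log lines, tends to compress well.
original = b"GET /index.html 200 OK\n" * 1000
compressed = zlib.compress(original)

print(len(original), "bytes before compression")
print(len(compressed), "bytes after compression")

# Compression is lossless: decompressing returns the exact original bytes.
assert zlib.decompress(compressed) == original
```

Data that is already compressed, such as most video and image formats, gains little, so the benefit varies widely from one workload to another.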

Btrfs is designed with scalability in mind. Its architecture allows it to handle large volumes of data and adapt to changing storage requirements. This makes it suitable for a wide range of applications, from personal systems to enterprise environments. Additionally, its ability to manage multiple devices within a single file system adds a layer of flexibility that is not present in traditional designs.

However, Btrfs is not without challenges. While it offers powerful features, it can be more complex to configure and manage compared to simpler file systems. Certain configurations, particularly those involving advanced RAID setups, may require careful tuning to achieve optimal performance and stability.

Despite these considerations, Btrfs continues to gain popularity as a modern file system that bridges the gap between simplicity and advanced functionality. It provides many of the features that users expect in contemporary computing environments while remaining integrated within the Linux ecosystem.

Its ability to combine snapshots, data integrity, and efficient storage management makes it particularly appealing for users who need more than just basic file storage. Whether used for development, system backups, or large-scale data management, Btrfs offers a versatile and forward-looking solution.

ZFS as an Advanced Data Management Solution

ZFS stands out as one of the most sophisticated file systems available in the Linux ecosystem, designed with a strong emphasis on data integrity, scalability, and integrated storage management. Originally developed for enterprise environments, it brings together the roles of a traditional file system and a volume manager into a single, unified solution. This integration allows for greater control over storage devices and eliminates many of the complexities associated with managing separate layers of storage infrastructure.

One of the defining principles behind ZFS is its focus on maintaining data integrity at all times. Unlike traditional file systems that rely heavily on the underlying hardware for reliability, ZFS takes a proactive approach by verifying data at every step. It uses end-to-end checksums to ensure that data written to disk is exactly the same when it is read back. If any discrepancy is detected, ZFS can automatically repair the corrupted data using redundant copies, provided that redundancy has been configured.

This self-healing capability is particularly valuable in environments where data corruption can have serious consequences. Silent data corruption, sometimes referred to as bit rot, is a subtle but dangerous issue that can go undetected in many file systems. ZFS addresses this problem directly by validating data whenever it is read and during periodic scrubs, and by correcting errors from redundant copies where they exist, which provides a high level of reliability over time.
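Self-healing combines the two ingredients described above: a checksum to decide which copy is correct, and redundancy to supply a good copy. The sketch below models a two-way mirror in memory. The `MirroredBlock` class is hypothetical, and SHA-256 is used only as a convenient checksum for the example.

```python
import hashlib


class MirroredBlock:
    """Toy two-way mirror whose checksum is stored apart from the data."""

    def __init__(self, data):
        self.copies = [bytearray(data), bytearray(data)]
        self.checksum = hashlib.sha256(data).digest()

    def _is_good(self, copy):
        return hashlib.sha256(bytes(copy)).digest() == self.checksum

    def read(self):
        for copy in self.copies:
            if self._is_good(copy):
                # Overwrite any damaged copy with the verified one.
                for i, other in enumerate(self.copies):
                    if not self._is_good(other):
                        self.copies[i] = bytearray(copy)
                return bytes(copy)
        raise IOError("all copies failed checksum verification")


block = MirroredBlock(b"ledger entry 42")
block.copies[0][0] ^= 0xFF                  # corrupt the first copy
print(block.read())                         # served from the healthy copy
print(block.copies[0] == block.copies[1])   # the damaged copy was repaired
```

Without the checksum, a plain mirror could tell that its two copies differ but not which one is correct. Without the second copy, the checksum could report the damage but not undo it.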

Another major advantage of ZFS is its support for snapshots and clones. Snapshots allow users to capture the state of the file system at a specific moment, enabling quick recovery from accidental deletions or system changes. These snapshots are highly efficient because they only store differences between versions rather than duplicating entire datasets. Clones extend this concept by allowing writable copies of snapshots, making them useful for testing, development, and backup scenarios.

ZFS also introduces a unique approach to storage management through its use of storage pools. Instead of managing individual disks or partitions, ZFS aggregates multiple devices into a single pool of storage. This pool can then be dynamically allocated to different file systems as needed, providing flexibility and simplifying administration. Adding new storage capacity is as simple as incorporating additional devices into the pool, allowing the system to scale seamlessly.
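The difference from fixed partitions can be sketched with a small model. The `StoragePool` class below is a hypothetical illustration that tracks only capacity; it ignores redundancy, quotas, and reservations, all of which matter in a real pool.

```python
class StoragePool:
    """Toy pool: datasets draw from shared capacity instead of fixed partitions."""

    def __init__(self):
        self.devices = {}   # device name -> capacity in GiB
        self.used = {}      # dataset name -> GiB consumed

    @property
    def capacity(self):
        return sum(self.devices.values())

    @property
    def free(self):
        return self.capacity - sum(self.used.values())

    def add_device(self, name, size_gib):
        self.devices[name] = size_gib

    def write(self, dataset, size_gib):
        if size_gib > self.free:
            raise OSError("pool is out of space")
        self.used[dataset] = self.used.get(dataset, 0) + size_gib


pool = StoragePool()
pool.add_device("disk0", 100)
pool.write("home", 60)
pool.write("vm-images", 30)
print(pool.free)               # both datasets share the same free space
pool.add_device("disk1", 100)
print(pool.free)               # new capacity is visible to every dataset
```

No dataset has to be sized in advance. Each one simply consumes what it needs from the shared total, and added capacity benefits all of them at once.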

RAID-Z is another key feature that enhances ZFS’s reliability. Unlike traditional RAID implementations, RAID-Z is designed to avoid common issues such as the write hole problem. It comes in three levels, RAID-Z1, RAID-Z2, and RAID-Z3, which tolerate one, two, and three disk failures per group respectively without losing data. This makes ZFS an excellent choice for environments where uptime and data protection are critical.
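The principle behind single-parity redundancy can be shown with XOR. The sketch below is a simplification under stated assumptions: it uses three equally sized data blocks and one parity block, and it does not model the variable-width stripes or checksums that distinguish RAID-Z from traditional RAID.

```python
from functools import reduce


def xor_blocks(blocks):
    """XOR equally sized byte blocks together, column by column."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


data_blocks = [b"AAAA", b"BBBB", b"CCCC"]   # one block per data disk
parity = xor_blocks(data_blocks)            # stored on a further disk

# Simulate losing the second disk and rebuild it from the survivors.
survivors = [data_blocks[0], data_blocks[2], parity]
rebuilt = xor_blocks(survivors)
print(rebuilt)
```

XOR-ing the surviving blocks with the parity cancels out everything except the missing block, which is why one parity block is enough to survive one failure.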

Despite its many advantages, ZFS does come with certain considerations. It requires more system resources than simpler file systems, particularly in terms of memory. Proper configuration and hardware planning are essential to fully leverage its capabilities. Additionally, due to licensing differences, ZFS is not included directly in the Linux kernel, which means it must be installed and managed separately.

Even with these challenges, ZFS remains a powerful option for users who need advanced storage features and robust data protection. Its combination of integrity checking, scalability, and integrated management makes it a preferred choice for enterprise systems, data centers, and storage-heavy applications.

XFS as a High-Performance File System

XFS is designed with performance and scalability as its primary goals, making it particularly well-suited for demanding workloads that involve large files and high levels of parallel input and output operations. Originally developed for high-end computing environments, it has since become a popular choice in enterprise Linux distributions due to its ability to handle intensive data processing tasks efficiently.

One of the key strengths of XFS is its ability to deliver consistent performance under heavy workloads. It is optimized for handling large, sequential data transfers, which are common in applications such as video processing, scientific simulations, and big data analytics. This focus on throughput allows XFS to outperform many other file systems in scenarios where speed and efficiency are critical.

XFS achieves its performance advantages through the use of advanced data structures, including B+ trees for managing metadata. These structures enable the file system to track free space and allocate storage efficiently, even as the size of the file system grows. This scalability ensures that performance remains stable, whether the system is managing a few gigabytes or several petabytes of data.
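One reason ordered indexes help with allocation is that finding a free extent of a suitable size becomes a search rather than a scan. The sketch below stands in for that idea with a sorted Python list and the `bisect` module. It is not a B+ tree, and the extent values are made up for the example.

```python
import bisect

# Free extents as (length, start) tuples kept sorted by length, mimicking
# a size-ordered index. A sorted list replaces the real B+ tree here.
free_by_size = sorted([(4, 100), (16, 300), (64, 1000), (8, 200)])


def allocate(length):
    """Take the smallest free extent that fits and return its start block."""
    i = bisect.bisect_left(free_by_size, (length, 0))
    if i == len(free_by_size):
        raise OSError("no free extent is large enough")
    ext_len, start = free_by_size.pop(i)
    if ext_len > length:
        # Return the unused tail of the extent to the index.
        bisect.insort(free_by_size, (ext_len - length, start + length))
    return start


print(allocate(10))     # start block of the extent that was chosen
print(free_by_size)     # remaining free extents, still sorted by length
```

A tree-based index keeps this lookup fast as the number of free extents grows, which a flat list cannot do once insertions and removals become frequent.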

Metadata journaling is another feature that contributes to XFS’s reliability. By logging changes to metadata before they are applied, the file system can recover quickly from unexpected shutdowns or crashes. While this approach does not provide the same level of data integrity protection as systems like ZFS or Btrfs, it strikes a balance between reliability and performance.

XFS also supports online resizing, allowing administrators to expand the file system without taking it offline. This capability is particularly useful in environments where downtime must be minimized. However, it is important to note that XFS does not support shrinking, which means careful planning is required when allocating storage space.

Another notable aspect of XFS is its ability to handle parallel workloads effectively. It is designed to take advantage of modern multi-core processors and high-speed storage devices, enabling multiple operations to occur simultaneously without significant performance degradation. This makes it an ideal choice for systems that run databases, virtual machines, or other resource-intensive applications.

While XFS excels in performance, it does not include some of the advanced features found in newer file systems. For example, it lacks built-in support for snapshots and comprehensive data integrity checks. As a result, it may not be the best choice for environments where data protection is the highest priority.

Nevertheless, XFS remains a strong contender for scenarios where speed and scalability are paramount. Its ability to handle large files and sustain high throughput makes it a reliable option for demanding workloads, particularly in enterprise and high-performance computing environments.

Comparing Design Philosophies and Core Approaches

Each of the major Linux file systems is built around a distinct design philosophy, reflecting different priorities and use cases. Understanding these underlying approaches provides valuable insight into why certain file systems perform better in specific scenarios and how they address common challenges in data storage and management.

Ext4 represents a conservative and evolutionary approach. It builds upon earlier designs, refining existing features rather than introducing radical changes. This focus on stability and backward compatibility makes it a dependable choice for general-purpose use. Its design prioritizes simplicity and reliability, ensuring that it performs consistently across a wide range of environments without requiring extensive configuration.

Btrfs, on the other hand, takes a more forward-looking approach. It is designed to incorporate modern features that address the limitations of traditional file systems. By using copy-on-write mechanisms, checksums, and integrated volume management capabilities, Btrfs aims to provide a comprehensive solution for data integrity and flexibility. Its design reflects the needs of contemporary computing environments, where data protection and efficient storage utilization are increasingly important.

ZFS goes even further by rethinking the role of a file system entirely. Instead of treating storage management and data organization as separate concerns, it combines them into a single, cohesive system. This integration allows for advanced features such as self-healing data, dynamic storage pools, and efficient snapshotting. ZFS is designed with enterprise-level requirements in mind, prioritizing data integrity and scalability above all else.

XFS focuses primarily on performance and scalability. Its design is optimized for handling large volumes of data and supporting high-throughput workloads. By emphasizing efficient data allocation and parallel processing, XFS delivers exceptional speed in the right environments. However, this focus on performance comes at the expense of some advanced features found in other file systems.

These differing philosophies highlight the trade-offs involved in file system design. A system that excels in performance may lack advanced data protection features, while one that prioritizes integrity may require more resources and careful management. The choice ultimately depends on the specific needs of the user and the environment in which the file system will be deployed.

Another important consideration is how these file systems handle growth and change. Modern computing environments are dynamic, with storage requirements evolving over time. File systems like Btrfs and ZFS are designed to adapt to these changes, offering flexible storage management and easy scalability. In contrast, more traditional systems like ext4 and XFS may require more planning and manual intervention to achieve similar results.

By examining these core approaches, it becomes clear that there is no one-size-fits-all solution. Each file system offers a unique combination of features and trade-offs, making it suitable for different types of workloads and use cases.

Evaluating Performance, Reliability, and Use Cases

When selecting a file system, it is essential to consider how it performs under real-world conditions and how well it meets the specific requirements of your workload. Performance, reliability, and intended use cases all play a significant role in determining the most appropriate choice.

Ext4 is often favored for its balanced performance and reliability. It handles everyday tasks efficiently, making it suitable for desktops, small servers, and general-purpose applications. Its low overhead and minimal resource requirements allow it to perform well even on modest hardware. For users who need a stable and predictable environment, ext4 remains a dependable option.

Btrfs offers a different set of advantages, particularly in scenarios where data integrity and flexibility are important. Its snapshot capabilities make it ideal for systems that require frequent backups or the ability to roll back changes easily. Developers and system administrators often use Btrfs to manage complex environments, as it provides tools for maintaining and protecting data without relying on external solutions.

ZFS is best suited for environments where data protection is the top priority. Its advanced features make it a strong choice for storage servers, backup systems, and enterprise applications. The ability to detect and repair data corruption automatically ensures a high level of reliability, even in large-scale deployments. However, its resource requirements mean that it is typically used on systems with sufficient memory and processing power.

XFS excels in performance-oriented scenarios. It is commonly used in applications that involve large files and high levels of parallel processing. Media production, scientific research, and data analytics are all areas where XFS can deliver significant benefits. Its ability to maintain consistent performance under heavy workloads makes it a valuable tool for high-demand environments.

Reliability is another critical factor to consider. While all modern file systems include mechanisms to handle failures, the level of protection varies. Ext4 and XFS rely on journaling to maintain consistency, while Btrfs and ZFS incorporate more advanced techniques such as checksums and self-healing. These differences can have a significant impact on data safety, particularly in environments where data integrity is critical.

Ultimately, the choice of file system depends on the specific needs of the user. A home user may prioritize simplicity and ease of use, while an enterprise administrator may require advanced features and scalability. By carefully evaluating the strengths and limitations of each option, it is possible to select a file system that aligns with your goals and ensures optimal performance and reliability.

Storage Efficiency and Space Management Techniques

Efficient use of storage is a critical factor when evaluating file systems, especially as data volumes continue to grow rapidly across modern computing environments. Each file system approaches space management differently, using unique techniques to balance performance, capacity, and reliability. Understanding these methods provides deeper insight into how ext4, Btrfs, ZFS, and XFS behave under various workloads.

Ext4 relies on a relatively traditional block allocation strategy, enhanced by features like extents and delayed allocation. Extents allow contiguous blocks of data to be grouped together, reducing fragmentation and improving read and write speeds. Delayed allocation further refines this process by postponing block assignment until the system has a clearer picture of how much data needs to be written. This reduces wasted space and helps maintain overall efficiency, particularly in environments with frequent file modifications.

Btrfs introduces a more dynamic approach through its copy-on-write mechanism. Instead of overwriting existing data, it writes new data to a different location and updates metadata accordingly. While this approach improves data safety and enables features like snapshots, it can also lead to increased fragmentation over time if not managed carefully. However, Btrfs compensates for this with built-in defragmentation tools and transparent compression, which helps reduce the overall storage footprint.

Compression in Btrfs is particularly noteworthy because it operates automatically and does not require manual intervention once enabled. By reducing the size of stored data, it effectively increases available space and can even improve performance in scenarios where disk I/O is a bottleneck. This makes Btrfs an attractive option for systems where storage efficiency is a priority.

ZFS takes storage management to another level by integrating volume management directly into the file system. Instead of dealing with fixed partitions, users work with storage pools that can dynamically allocate space as needed. This eliminates many of the limitations associated with traditional partitioning and allows for more flexible and efficient use of available storage.

ZFS also employs copy-on-write, similar to Btrfs, but enhances it with additional mechanisms to ensure consistency and reduce fragmentation. Its intelligent caching system, combined with features like deduplication and compression, enables it to optimize storage usage effectively. Deduplication, in particular, can significantly reduce space consumption by eliminating duplicate copies of data, although it requires substantial memory resources to function efficiently.
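Deduplication and its memory cost both follow from the same structure: a table that maps content hashes to stored blocks. The `DedupStore` class below is a hypothetical in-memory sketch that works on whole chunks and does not reflect the actual ZFS table format.

```python
import hashlib


class DedupStore:
    """Toy block store that keeps one physical copy per unique content hash."""

    def __init__(self):
        self.blocks = {}     # digest -> data (the dedup table lives in memory)
        self.refcount = {}   # digest -> number of logical references

    def write(self, data):
        digest = hashlib.sha256(data).hexdigest()
        if digest not in self.blocks:
            # First time this content is seen, so store it physically.
            self.blocks[digest] = data
        self.refcount[digest] = self.refcount.get(digest, 0) + 1
        return digest


store = DedupStore()
for chunk in [b"base image", b"base image", b"base image", b"user data"]:
    store.write(chunk)

logical = sum(store.refcount.values())
physical = len(store.blocks)
print(logical, "logical blocks stored as", physical, "physical blocks")
```

Every write has to consult the table, so it needs to stay in fast memory to avoid slowing down the write path. The table grows with the number of unique blocks, which explains the substantial memory requirement.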

XFS focuses on high-performance allocation strategies designed to minimize overhead and maximize throughput. It uses allocation groups to divide the file system into smaller, manageable sections, allowing multiple operations to occur in parallel without contention. This design improves scalability and ensures that performance remains consistent even as the file system grows.
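The benefit of dividing a file system into independent regions can be sketched with threads and per-region locks. Everything in the example is an assumption made for illustration: the group count, the group size, and the rule that pins each worker to one group are not taken from XFS itself.

```python
import threading


class AllocationGroup:
    """One independent region with its own lock and free-block pointer."""

    def __init__(self, first_block, size):
        self.lock = threading.Lock()
        self.next_free = first_block
        self.end = first_block + size

    def allocate(self, count):
        with self.lock:  # contention is limited to this group only
            if self.next_free + count > self.end:
                raise OSError("allocation group is full")
            start = self.next_free
            self.next_free += count
            return start


# Hypothetical layout: 4 groups of 1000 blocks each.
groups = [AllocationGroup(i * 1000, 1000) for i in range(4)]
results = {}


def worker(worker_id):
    # Each worker is pinned to a different group, so no lock is shared.
    group = groups[worker_id % len(groups)]
    results[worker_id] = [group.allocate(10) for _ in range(3)]


threads = [threading.Thread(target=worker, args=(i,)) for i in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(results[0])   # allocations from the first group
print(results[3])   # allocations from the last group
```

With a single global lock, the four workers would have to take turns. With one lock per group, they only wait when two of them target the same region.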

While XFS does not include built-in compression or deduplication, its efficient handling of large files and sequential data streams makes it highly effective in environments where raw performance is more important than advanced storage optimization. Its approach to space management is straightforward, prioritizing speed and predictability over complex features.

These differing strategies highlight the trade-offs between simplicity, flexibility, and advanced functionality. Some file systems prioritize efficient use of space through compression and deduplication, while others focus on maintaining high performance with minimal overhead. The right choice depends on the specific requirements of the workload and the available system resources.

Data Integrity, Error Handling, and Reliability Mechanisms

Data integrity is one of the most important considerations in modern file system design. As storage devices become larger and more complex, the risk of data corruption increases. File systems must therefore include mechanisms to detect, prevent, and recover from errors to ensure that data remains accurate and reliable over time.

Ext4 uses journaling as its primary method for maintaining consistency. By recording metadata changes before they are applied, it ensures that the file system can recover quickly from unexpected failures. This approach minimizes the risk of corruption during crashes, but it does not provide comprehensive protection against silent data corruption. As a result, ext4 is reliable for general use but may not be sufficient for environments where absolute data integrity is required.

Btrfs addresses this limitation by incorporating checksums for both data and metadata. Every piece of information written to disk is verified when it is read back, allowing the system to detect corruption immediately. If redundancy is configured, Btrfs can also repair corrupted data automatically, providing a higher level of protection compared to traditional file systems.

ZFS takes data integrity even further with its end-to-end checksum model. The checksum for each block is stored in its parent block rather than alongside the data, so every read is verified against an independent record, and errors are detected as soon as the affected data is read or scrubbed. This comprehensive approach eliminates many of the weaknesses found in older file systems and provides a robust foundation for reliable data storage.

One of the standout features of ZFS is its self-healing capability. When corruption is detected, the system can retrieve a correct copy of the data from redundant storage and repair the damaged block automatically. This process occurs transparently, without requiring user intervention, making ZFS particularly well-suited for critical applications where data loss is unacceptable.

XFS, while highly reliable in terms of performance and stability, does not include the same level of built-in data integrity features as Btrfs or ZFS. Its reliance on metadata journaling ensures quick recovery from crashes, but it does not actively verify the integrity of stored data. For this reason, XFS is often used in environments where external solutions or hardware-level protections are in place to handle data integrity.

Error handling also varies significantly between file systems. Ext4 and XFS prioritize quick recovery and minimal downtime, making them suitable for systems where availability is more important than advanced error correction. In contrast, Btrfs and ZFS focus on preventing and repairing errors, even if this requires additional processing overhead.

These differences illustrate the importance of aligning file system choice with the level of data protection required. For everyday use, basic reliability may be sufficient, but for mission-critical systems, advanced integrity features become essential.

Scalability and Adaptability in Growing Environments

As data storage needs continue to expand, scalability has become a defining feature of modern file systems. The ability to handle increasing amounts of data without sacrificing performance or reliability is crucial for both individual users and large organizations.

Ext4 offers solid scalability within its design limits. It supports large file sizes and file systems, making it suitable for most standard applications. However, its traditional architecture means that scaling beyond certain thresholds may require manual intervention or migration to a different file system.

Btrfs is designed with scalability in mind, offering features that allow it to grow and adapt easily. Its ability to manage multiple devices within a single file system provides flexibility for expanding storage capacity. Users can add or remove devices dynamically, enabling the system to evolve alongside changing requirements.

ZFS excels in scalability thanks to its storage pool architecture. By abstracting physical storage into a unified pool, it allows for seamless expansion without the need for complex reconfiguration. New devices can be added to the pool, and new writes are then spread across the enlarged pool, although existing data is not rebalanced automatically. This makes ZFS particularly well-suited for large-scale deployments where storage needs are constantly evolving.

XFS also demonstrates strong scalability, particularly in high-performance environments. Its allocation group design allows it to handle large volumes of data efficiently, maintaining consistent performance even as the file system grows. This makes it a reliable choice for applications that require both speed and scalability.

Adaptability is another important aspect of scalability. Modern file systems must be able to accommodate changes in workload, hardware, and storage requirements. Btrfs and ZFS are particularly strong in this area, offering features like snapshots, dynamic allocation, and integrated management tools that simplify the process of adapting to new conditions.

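In contrast, ext4 and XFS are more static in their design. Each manages a single block device and relies on a separate layer, such as LVM or software RAID, for pooling and redundancy. Both can be grown while mounted, but ext4 can only be shrunk while unmounted and XFS has traditionally not supported shrinking at all, so capacity requires careful planning up front. While they are highly reliable within their intended use cases, they may not offer the same level of flexibility as newer file systems.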

The ability to scale effectively is not just about handling more data—it is about doing so efficiently and reliably. File systems that can adapt to changing requirements without significant disruption provide a clear advantage in modern computing environments.

Administrative Complexity and Maintenance Considerations

Managing a file system involves more than just initial setup. Ongoing maintenance, monitoring, and optimization are essential to ensure that the system continues to perform well over time. The level of complexity involved varies significantly between different file systems, influencing their suitability for different types of users and environments.

Ext4 is known for its simplicity and ease of use. It requires minimal configuration and works reliably in most scenarios without extensive tuning. Maintenance tasks such as file system checks and repairs are straightforward, making it an excellent choice for users who prefer a low-maintenance solution.

Btrfs introduces additional complexity due to its advanced features. While these features provide significant benefits, they also require a deeper understanding of the file system to use effectively. Tasks such as managing snapshots, balancing storage, and optimizing performance may require more effort compared to simpler systems.

ZFS is often considered the most complex of the four file systems discussed here. Its extensive feature set and integrated management capabilities provide powerful tools for handling storage, but they also demand a higher level of expertise. Proper configuration is essential to achieve optimal performance and reliability, and administrators must be familiar with concepts such as storage pools, redundancy levels, and caching mechanisms.

Despite this complexity, ZFS offers a high degree of automation, which can simplify certain aspects of management. Features like self-healing and automatic data verification reduce the need for manual intervention, allowing administrators to focus on higher-level tasks.

XFS strikes a balance between simplicity and performance. While it does not require as much configuration as Btrfs or ZFS, it still offers advanced capabilities for managing large-scale systems. Its tools for monitoring and maintenance are well-developed, making it a practical choice for environments where performance is a priority.

Maintenance considerations also include long-term stability and support. Ext4 and XFS have a long track record and are widely supported across Linux distributions, ensuring compatibility and reliability. Btrfs is newer but continues to mature and gain adoption, and ZFS remains a specialized solution often used in environments where its advanced features justify the additional complexity.

Choosing the right file system involves balancing the need for advanced features with the ability to manage and maintain the system effectively. Simpler file systems may be easier to use, but more complex options offer capabilities that can significantly enhance performance and data protection when used correctly.

Real World Deployment Scenarios and Practical Applications

Choosing a file system becomes much clearer when viewed through real-world usage scenarios. Each file system—ext4, Btrfs, ZFS, and XFS—finds its strengths in specific environments, and understanding these practical applications helps align technical capabilities with actual needs.

Ext4 is widely used in everyday computing environments. Personal desktops, laptops, and small servers often rely on it because of its stability and minimal maintenance requirements. It performs consistently across a variety of workloads, including web hosting, software development, and general storage tasks. For users who want a dependable system that works without constant monitoring or tuning, ext4 remains a go-to solution.

In enterprise environments where predictability is critical, ext4 is often chosen for boot partitions and system-critical areas. Its simplicity reduces the risk of unexpected behavior, making it ideal for foundational components of an operating system. Even when other file systems are used for advanced storage needs, ext4 frequently serves as a reliable base layer.

Btrfs is commonly deployed in scenarios that require flexibility and modern features. Development environments benefit greatly from its snapshot capabilities, allowing developers to experiment with changes and roll back instantly if needed. This makes it particularly useful for testing software, managing system updates, and maintaining stable configurations.

Backup systems also benefit from Btrfs due to its efficient snapshot and incremental storage capabilities. Instead of copying entire datasets repeatedly, a snapshot shares every unchanged block with the original and stores only the blocks that differ between versions, saving both time and storage space. This efficiency makes it suitable for environments where frequent backups are necessary.
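The space saving described above can be illustrated with a toy model. This is only a sketch of the copy-on-write idea, not how Btrfs is implemented: the `CowVolume` class and its methods are hypothetical, and each file is treated as a single block for simplicity.

```python
class CowVolume:
    """Toy copy-on-write volume: snapshots share blocks with the live tree."""

    def __init__(self):
        self.blocks = {}     # block id -> bytes (the "physical" storage)
        self.live = {}       # file name -> block id (current state)
        self.snapshots = {}  # snapshot name -> {file name: block id}
        self._next_id = 0

    def write(self, name, data):
        # Copy-on-write: never overwrite in place, always allocate a new block.
        block_id = self._next_id
        self._next_id += 1
        self.blocks[block_id] = data
        self.live[name] = block_id

    def snapshot(self, snap_name):
        # A snapshot copies only the references, not the data they point to.
        self.snapshots[snap_name] = dict(self.live)

    def read(self, name, snapshot=None):
        tree = self.live if snapshot is None else self.snapshots[snapshot]
        return self.blocks[tree[name]]

    def referenced_blocks(self):
        # Blocks still reachable from the live tree or from any snapshot.
        referenced = set(self.live.values())
        for tree in self.snapshots.values():
            referenced.update(tree.values())
        return len(referenced)


vol = CowVolume()
vol.write("config", b"v1")
vol.write("data", b"large payload")
vol.snapshot("before-upgrade")
vol.write("config", b"v2")  # only this file changes after the snapshot

print(vol.referenced_blocks())  # blocks held for the live tree plus one snapshot
```

Two full copies of the two files would need four blocks. Here the unchanged `data` block is shared, so the live tree and the snapshot together reference fewer, and the old `config` remains readable through the snapshot.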

ZFS is often found in enterprise storage systems, data centers, and high-capacity servers. Its advanced data protection features make it ideal for applications where data integrity cannot be compromised. Industries such as finance, healthcare, and scientific research rely on ZFS to ensure that their data remains accurate and recoverable at all times.

Another common use case for ZFS is network-attached storage systems. Its ability to manage large storage pools, combined with features like snapshots and replication, makes it a powerful solution for centralized data storage. Organizations that require reliable backup and disaster recovery strategies often turn to ZFS for its robustness and automation capabilities.

XFS is frequently used in performance-critical environments. Media production companies, for example, rely on XFS to handle large video files and high-throughput editing workflows. Its ability to process large amounts of data quickly and efficiently makes it well-suited for tasks that involve continuous streaming and heavy input/output operations.

Big data platforms and analytics systems also benefit from XFS. These environments often involve processing massive datasets in parallel, and XFS is designed to handle such workloads without significant performance degradation. Its scalability ensures that it can grow alongside the demands of data-intensive applications.

Virtualization is another area where XFS excels. Hosting multiple virtual machines requires a file system that can handle concurrent operations efficiently, and XFS provides the necessary performance characteristics to support such environments. Its consistent throughput ensures that virtualized systems remain responsive even under heavy load.

Strengths and Limitations Across File Systems

Every file system comes with its own set of strengths and limitations, and understanding these trade-offs is essential for making an informed decision. No single option is perfect for all scenarios, and each design reflects a balance between competing priorities.

Ext4’s greatest strength lies in its reliability and simplicity. It is well-tested, widely supported, and easy to manage. These qualities make it a safe choice for most general-purpose applications. However, it lacks advanced features such as built-in snapshots and transparent compression, and its optional checksums cover metadata rather than file contents. For users who need these capabilities, ext4 may feel limited.

Btrfs offers a rich set of modern features, including snapshots, compression, and data integrity verification. These capabilities make it a versatile option for a wide range of applications. However, its complexity can be a drawback, particularly for users who are not familiar with its advanced features. Certain configurations may require careful tuning to achieve optimal performance and stability.

ZFS stands out for its exceptional data protection and scalability. Its ability to detect and repair corruption automatically provides a level of reliability that few other file systems can match. However, this power comes at the cost of increased resource usage and complexity. Systems running ZFS typically require more memory and careful planning to fully utilize its features.
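The detect-and-repair behavior described above can be sketched with a toy mirrored block. This is an illustration of the general idea only, under stated assumptions: the `MirroredBlock` class is hypothetical, SHA-256 stands in for whatever checksum the file system actually uses, and real implementations store checksums separately from the data they protect.

```python
import hashlib


def checksum(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


class MirroredBlock:
    """Toy self-healing block: several copies plus one trusted checksum."""

    def __init__(self, data: bytes, copies: int = 2):
        self.expected = checksum(data)
        self.copies = [bytearray(data) for _ in range(copies)]

    def read(self) -> bytes:
        # Find the first copy whose contents still match the checksum.
        good = None
        for copy in self.copies:
            if checksum(bytes(copy)) == self.expected:
                good = bytes(copy)
                break
        if good is None:
            raise IOError("all copies failed verification")
        # Repair: overwrite any damaged copy with the verified data.
        for i, copy in enumerate(self.copies):
            if checksum(bytes(copy)) != self.expected:
                self.copies[i] = bytearray(good)
        return good


block = MirroredBlock(b"payload")
block.copies[0][0] ^= 0xFF       # simulate silent corruption of one copy
data = block.read()              # detects the bad copy and repairs it
print(data, bytes(block.copies[0]))
```

The sketch also shows why redundancy matters: with a single copy, the checksum could still detect the corruption, but there would be nothing to repair it from.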

XFS excels in performance and scalability, particularly in environments that handle large files and high levels of parallel processing. Its design allows it to maintain consistent performance even under heavy workloads. On the downside, it does not include advanced data integrity features like those found in Btrfs or ZFS, which may limit its suitability for certain applications.

Another important consideration is ecosystem support. Ext4 and XFS are deeply integrated into most Linux distributions, ensuring broad compatibility and ease of use. Btrfs is increasingly supported and continues to mature, while ZFS often requires additional setup: its CDDL license is generally considered incompatible with the kernel's GPL, so it is distributed as a separate, out-of-tree module. These factors can influence deployment decisions, especially in environments where ease of integration is a priority.
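One quick way to see what a given machine supports is to look at `/proc/filesystems`, where the Linux kernel lists the file systems currently registered with it. The sketch below is a minimal, hedged example: the function names are made up for illustration, and a missing entry may simply mean the corresponding module is not loaded yet rather than not installed.

```python
from pathlib import Path


def parse_registered(text: str) -> set:
    """Extract file system names from /proc/filesystems-style text.

    Each line is either "<name>" or "nodev <name>", so the name is
    always the last whitespace-separated field.
    """
    names = set()
    for line in text.splitlines():
        fields = line.split()
        if fields:
            names.add(fields[-1])
    return names


def registered_filesystems(path: str = "/proc/filesystems") -> set:
    """Return the registered file systems, or an empty set off Linux."""
    proc_file = Path(path)
    if not proc_file.exists():
        return set()
    return parse_registered(proc_file.read_text())


available = registered_filesystems()
for name in ("ext4", "btrfs", "zfs", "xfs"):
    state = "registered" if name in available else "not registered"
    print(f"{name}: {state}")
```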

By weighing these strengths and limitations, users can identify the file system that best matches their specific requirements. The decision often involves balancing performance, reliability, and feature set against the complexity of implementation and maintenance.

Performance Characteristics and Workload Optimization

Performance is a key factor in selecting a file system, but it is not a one-size-fits-all metric. Different workloads place different demands on storage systems, and each file system is optimized for specific types of operations.

Ext4 delivers solid all-around performance, making it suitable for a wide range of tasks. It handles small and medium-sized files efficiently and performs well in mixed workloads. Its balanced design ensures that it remains responsive under typical usage conditions, making it a reliable choice for general computing.

Btrfs performance can vary depending on how its features are used. While its copy-on-write mechanism and checksumming provide valuable benefits, they can introduce overhead in certain scenarios. However, features like compression can offset this by reducing the amount of data that needs to be written to disk. With proper configuration, Btrfs can achieve good performance while maintaining its advanced capabilities.
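How much compression helps depends heavily on the data. The sketch below uses Python's standard `zlib` module purely as a stand-in for file system compression (Btrfs offers zlib, LZO, and zstd); the sample data and the helper name `compressed_fraction` are assumptions made for illustration, and real-world ratios will differ.

```python
import os
import zlib


def compressed_fraction(data: bytes, level: int = 3) -> float:
    """Compressed size as a fraction of the original size."""
    return len(zlib.compress(data, level)) / len(data)


# Repetitive, log-like data compresses very well.
text_like = b"status=ok latency_ms=12\n" * 4096
# Random bytes stand in for already-compressed data such as video.
random_like = os.urandom(len(text_like))

print(f"log-like data:    {compressed_fraction(text_like):.3f}")
print(f"random-like data: {compressed_fraction(random_like):.3f}")
```

Data that shrinks substantially means fewer bytes written to disk, which is where compression can offset copy-on-write overhead. Data that does not shrink only costs CPU time.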

ZFS is designed for high-performance storage environments, but its efficiency depends heavily on available system resources. With sufficient memory and proper tuning, it can deliver excellent performance, particularly in read-heavy workloads. Its in-memory cache, the Adaptive Replacement Cache (ARC), plays a significant role in optimizing data access by keeping frequently and recently used data readily available.

XFS is optimized for throughput and parallelism, making it one of the fastest file systems for large-scale data operations. It performs exceptionally well in scenarios involving large files and sequential access patterns. Its ability to handle multiple operations simultaneously ensures that it can keep up with demanding workloads.

Workload optimization involves matching the strengths of a file system to the specific requirements of an application. For example, a database system may benefit from the consistency and reliability of ext4 or the performance of XFS, while a backup system may take advantage of the snapshot capabilities of Btrfs or ZFS.
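The matching process can be written down as a small decision helper. This is a deliberately simplified sketch that encodes only the rules of thumb from this comparison; the function `suggest_filesystem` and its parameters are hypothetical, and a real decision would weigh many more factors.

```python
def suggest_filesystem(
    *,
    needs_snapshots: bool = False,
    needs_self_healing: bool = False,
    large_parallel_io: bool = False,
    ample_memory: bool = False,
) -> str:
    """Map coarse workload requirements to one of the four file systems."""
    # Strongest integrity guarantees, provided the host can afford the memory.
    if needs_self_healing and ample_memory:
        return "ZFS"
    # Snapshots or checksumming without the heavier resource demands.
    if needs_snapshots or needs_self_healing:
        return "Btrfs"
    # Throughput-oriented workloads with large files and parallel access.
    if large_parallel_io:
        return "XFS"
    # General-purpose default.
    return "ext4"


print(suggest_filesystem(large_parallel_io=True))
print(suggest_filesystem(needs_snapshots=True))
print(suggest_filesystem(needs_self_healing=True, ample_memory=True))
print(suggest_filesystem())
```

The ordering of the checks is itself a design choice: data protection requirements are evaluated first because they are usually the hardest to retrofit later.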

Understanding how each file system handles different types of workloads allows users to make more informed decisions and achieve better overall performance. The right choice can significantly impact system efficiency and user experience.

Final Thoughts and Overall Comparison

When comparing ext4, Btrfs, ZFS, and XFS, it becomes clear that each file system serves a distinct purpose within the Linux ecosystem. Their differences are not just technical details—they represent fundamentally different approaches to managing data.

Ext4 remains a dependable and straightforward option, offering stability and ease of use for a wide range of applications. It is particularly well-suited for users who value reliability and minimal complexity. While it may not include the most advanced features, it continues to be a solid foundation for many systems.

Btrfs brings modern functionality to the forefront, providing tools for data management, integrity, and flexibility. Its ability to create snapshots, compress data, and verify integrity makes it a powerful choice for users who need more control over their storage environment. As it continues to mature, it is becoming an increasingly viable option for both personal and professional use.

ZFS stands as a premium solution for environments that demand the highest levels of data protection and scalability. Its advanced features and integrated design make it a leader in enterprise storage, particularly where reliability is critical. While it requires more resources and expertise, the benefits it offers can far outweigh these challenges in the right context.

XFS focuses on delivering exceptional performance and scalability, making it ideal for high-demand workloads. Its ability to handle large files and parallel operations ensures that it remains a top choice for performance-driven environments. Although it lacks some advanced data protection features, its speed and efficiency make it invaluable in many scenarios.

Ultimately, the best file system depends on the specific needs of the user. There is no universal answer, only the option that best aligns with the requirements of a given workload. By understanding the strengths and trade-offs of each file system, users can make informed decisions that enhance both performance and reliability.

This comparison highlights the diversity and flexibility of the Linux ecosystem. With multiple powerful file systems available, users have the freedom to choose the solution that best fits their goals, whether that means simplicity, advanced features, maximum performance, or enterprise-grade reliability.