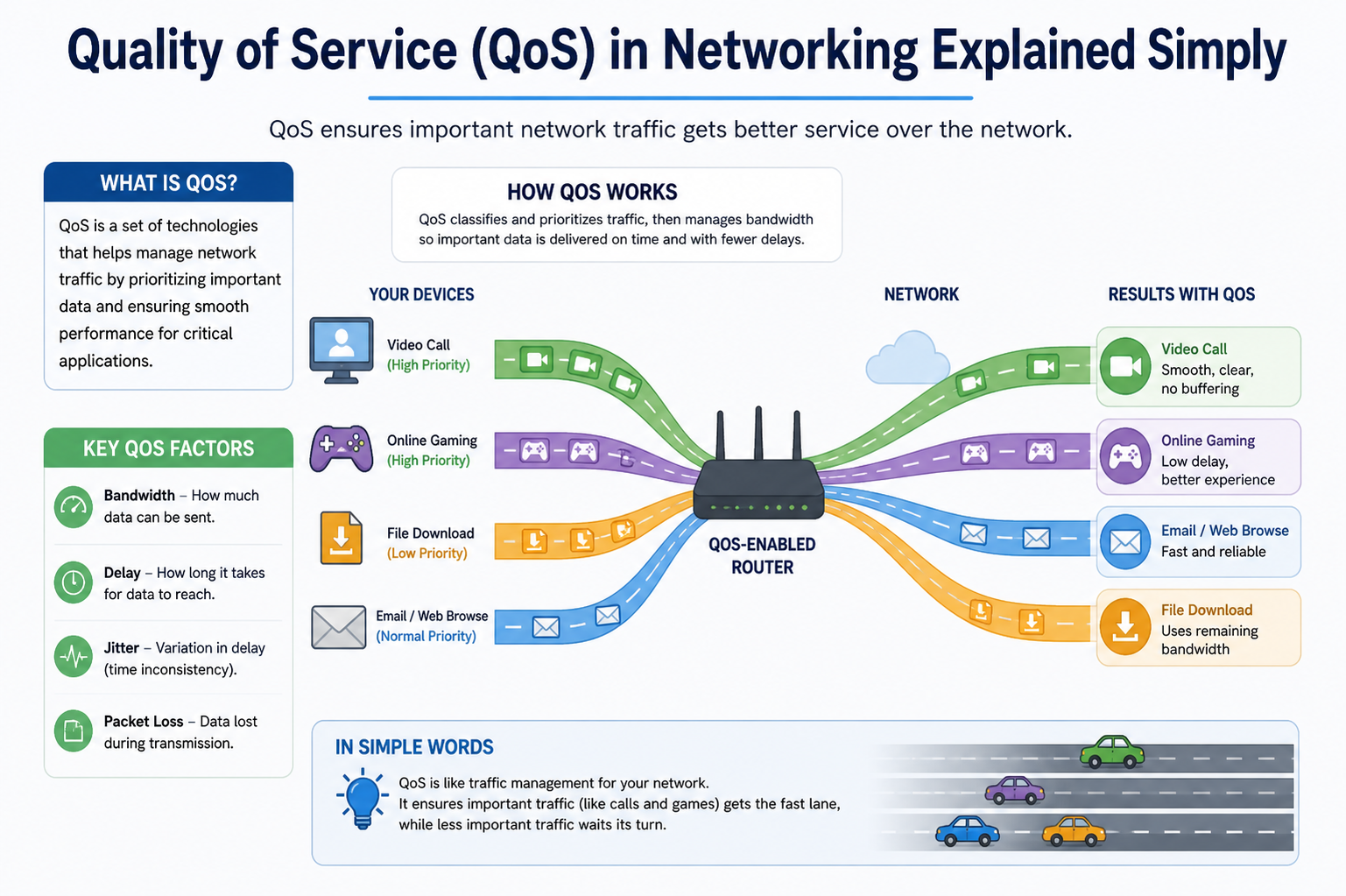

Quality of Service (QoS) in networking refers to a set of technologies and techniques used to manage and prioritize network traffic so that important data gets the performance it requires. In modern digital communication systems, not all data has the same importance or urgency. For example, a live video conference between employees requires smooth, uninterrupted transmission, while downloading a large file in the background can tolerate delays. QoS is designed to balance these different needs by controlling how data packets are handled across a network.

In today’s network environments, data is broken into small units called packets, which travel independently through routers and switches before being reassembled at the destination. Without proper control, these packets may experience delays, arrive out of order, or even get lost during transmission. QoS helps prevent such issues by ensuring that time-sensitive and critical applications receive priority treatment over less urgent traffic. This improves overall network efficiency and user experience.

The importance of QoS has grown significantly with the rise of cloud computing, real-time communication tools, online streaming services, and the Internet of Things (IoT). Networks are no longer used only for basic data transfer; they now carry voice calls, video meetings, financial transactions, sensor data, and many other types of traffic simultaneously. Each of these services has different performance requirements, making QoS a necessary component of modern network design.

Evolution of Network Traffic and the Need for QoS

In earlier networking environments, systems were relatively simple. Organizations often used separate infrastructures for different types of communication. Voice systems operated independently from data networks, and video services were limited or non-existent. This separation reduced complexity because each network handled a specific type of traffic with predictable requirements.

However, modern networks have evolved into unified systems where multiple types of traffic share the same infrastructure. This convergence has introduced new challenges for network administrators. They must now ensure that all types of traffic coexist without affecting each other negatively. For instance, a sudden spike in file downloads should not degrade the quality of ongoing video conferences or VoIP calls.

As a result, QoS has become a critical tool for managing network resources efficiently. It allows administrators to define rules that control how bandwidth is distributed, how packets are prioritized, and how congestion is managed. Without QoS, networks may become unpredictable, leading to poor performance, increased latency, and user dissatisfaction.

The increasing demand for remote work, online education, and digital collaboration tools has further highlighted the importance of QoS. Applications such as virtual meetings and cloud-based services require stable and consistent performance, which can only be achieved through effective traffic management techniques.

Understanding How Data Travels in a Network

To understand QoS, it helps to first understand how data moves across a network. When information is sent from one device to another, it is divided into packets. Each packet contains a portion of the original data along with header information such as the source and destination addresses and, for protocols such as TCP, a sequence number used to put the data back in order.

These packets travel through multiple networking devices such as routers and switches. Each device examines the packet and decides where to send it next. Since packets may take different paths to reach the same destination, they may arrive out of order or experience varying delays.

In a simple network with minimal traffic, this process works smoothly. However, in a busy network where many devices are transmitting data simultaneously, congestion can occur. Congestion leads to delays, packet loss, and jitter, all of which negatively impact performance.

QoS addresses these issues by controlling how packets are processed at each stage of their journey. It ensures that important packets are forwarded quickly, while less important traffic may be delayed or queued. This controlled handling of data is what makes QoS essential in complex networking environments.

Key Performance Factors in Network Quality

QoS is built around several key performance factors that determine how well a network operates. These factors help network administrators measure performance and apply appropriate policies to improve efficiency.

Bandwidth and Link Capacity

Bandwidth refers to the maximum amount of data that can be transmitted over a network connection in a given period of time. It is usually measured in bits per second. Higher bandwidth means more data can be transferred in the same amount of time, which generally improves performance.

However, bandwidth alone does not guarantee good performance. Even high-bandwidth networks can suffer from congestion if too many users try to access resources at the same time. QoS helps manage bandwidth usage by allocating it according to priority levels, ensuring that critical applications receive sufficient capacity.

Throughput and Real-World Performance

Throughput represents the actual amount of data successfully transmitted over a network. While bandwidth is the theoretical maximum capacity, throughput reflects real-world performance after accounting for delays, errors, and retransmissions.

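In practical scenarios, throughput is lower than the nominal bandwidth because of protocol overhead, congestion, and retransmissions. QoS does not add capacity, but it can improve the throughput that important applications achieve by reducing congestion-related loss and optimizing traffic flow, ensuring that available resources are used effectively.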
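To make the difference between bandwidth and throughput concrete, here is a minimal sketch. The function names and the sample figures (a 100 Mbit/s link, 90,000,000 bytes delivered in 10 seconds) are assumptions chosen for illustration, not measurements from a real network.

```python
def throughput_bps(bytes_delivered: int, elapsed_seconds: float) -> float:
    """Measured throughput in bits per second."""
    if elapsed_seconds <= 0:
        raise ValueError("elapsed_seconds must be positive")
    return bytes_delivered * 8 / elapsed_seconds


def link_efficiency(measured_bps: float, bandwidth_bps: float) -> float:
    """Fraction of the nominal link capacity actually achieved."""
    return measured_bps / bandwidth_bps


# Hypothetical measurement: 90,000,000 bytes in 10 s on a 100 Mbit/s link.
measured = throughput_bps(90_000_000, 10.0)
print(measured)                                # 72000000.0
print(link_efficiency(measured, 100_000_000))  # 0.72
```

In this made-up case the link delivers 72 Mbit/s of its nominal 100 Mbit/s, which is the kind of gap that overhead and retransmissions produce.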

Latency and Network Delay

Latency refers to the time it takes for a data packet to travel from the source to the destination. It is one of the most important factors affecting real-time applications such as voice and video communication.

High latency can cause noticeable delays in conversations, making communication difficult. QoS cannot shorten the physical path a packet travels, but it reduces how long time-sensitive packets wait in device queues by prioritizing them over other traffic.

Jitter and Packet Variation

Jitter refers to the variation in packet arrival times. In an ideal network, packets should arrive at consistent intervals. However, due to congestion and varying network paths, delays may occur, causing irregular arrival times.

Jitter is particularly harmful to real-time applications such as VoIP and video streaming, where smooth playback is essential. QoS minimizes jitter by maintaining consistent packet flow and prioritizing delay-sensitive traffic.
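One simple way to put a number on jitter is to average the change in one-way delay between consecutive packets. The sketch below uses that simplified definition; real tools often use a smoothed estimator instead, such as the one defined for RTP in RFC 3550. The timestamps are hypothetical values in milliseconds.

```python
def mean_jitter(send_times, recv_times):
    """Average absolute change in delay between consecutive packets."""
    delays = [r - s for s, r in zip(send_times, recv_times)]
    changes = [abs(b - a) for a, b in zip(delays, delays[1:])]
    return sum(changes) / len(changes) if changes else 0.0


# Hypothetical trace: packets sent every 20 ms, received with varying delay.
send_ms = [0, 20, 40, 60]
recv_ms = [50, 72, 89, 115]  # per-packet delays are 50, 52, 49, 55 ms

print(mean_jitter(send_ms, recv_ms))  # roughly 3.67 ms
```

A perfectly steady stream would give a value of zero, even if the delay itself were large.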

Packet Loss and Network Reliability

Packet loss occurs when data packets fail to reach their destination. This can happen due to congestion, hardware failures, or network errors. Lost packets often need to be retransmitted, which increases delay and reduces efficiency.

QoS reduces packet loss by managing congestion and ensuring that high-priority traffic is delivered reliably. This improves overall network stability and performance.

Types of Network Traffic and Their Behavior

Not all network traffic behaves in the same way. Some applications are highly sensitive to delays, while others can tolerate interruptions without major issues. Understanding these differences is essential for effective QoS implementation.

Real-time applications such as video conferencing, voice calls, and online gaming require low latency and minimal jitter. These applications are considered time-sensitive because even small delays can significantly affect performance.

On the other hand, applications such as email, file transfers, and software updates are more flexible. They do not require immediate delivery and can tolerate delays or temporary interruptions without major impact.

QoS categorizes traffic based on its sensitivity and importance. This classification allows the network to treat each type of traffic appropriately, ensuring that critical applications receive the resources they need.

Introduction to QoS Mechanisms in Networking

QoS is not a single technology but a combination of multiple mechanisms working together. These mechanisms control how data is classified, prioritized, and transmitted across the network.

One of the primary functions of QoS is traffic classification. This process involves identifying different types of data and assigning them priority levels. Once classified, traffic is managed according to predefined policies.

Another important mechanism is queuing, which determines the order in which packets are processed. When network congestion occurs, packets are placed into queues based on their priority. High-priority packets are processed first, while lower-priority packets wait their turn.

Bandwidth management is another key component of QoS. It ensures that network resources are distributed fairly and efficiently. By controlling how much bandwidth each application can use, QoS prevents any single application from overwhelming the network.

Together, these mechanisms create a structured system for managing network traffic. They ensure that performance remains stable even under heavy load conditions.

Why QoS is Essential in Modern Networks

Modern networks are highly dynamic and carry a wide variety of traffic types simultaneously. Without QoS, all traffic would be treated equally, leading to unpredictable performance and potential network failures during peak usage.

QoS provides structure and control, allowing administrators to define clear rules for traffic handling. This ensures that critical applications always receive the necessary resources, even when the network is under heavy demand.

In business environments, QoS is especially important because many operations depend on real-time communication and data exchange. Financial transactions, customer support systems, and collaborative tools all require reliable network performance.

As digital transformation continues to expand, the role of QoS will become even more important in maintaining efficient and reliable communication systems across organizations.

Quality of Service Measurement Parameters in Detail

QoS in networking relies heavily on measurable performance indicators that allow administrators to understand how well a network is functioning. These measurements provide a clear picture of traffic behavior and help identify whether applications are receiving the required level of service. Without proper measurement, it would be impossible to determine whether QoS policies are working effectively or whether adjustments are needed.

One of the most fundamental QoS measurements is bandwidth utilization. This refers to how much of the available network capacity is being used at any given time. Monitoring bandwidth usage helps identify congestion points and ensures that no single application consumes excessive resources. In a well-managed QoS environment, bandwidth is distributed according to predefined priorities, ensuring that critical services remain unaffected even during peak usage periods.

Throughput is another important measurement that reflects the actual amount of data successfully delivered over the network. Unlike theoretical bandwidth, throughput accounts for real-world conditions such as packet loss, retransmissions, and delays. A network may have high bandwidth but still experience low throughput if it is poorly optimized. QoS improves throughput by reducing unnecessary congestion and ensuring efficient packet handling.

Latency measurement is also essential in QoS analysis. Latency represents the delay between sending and receiving data packets. Even small increases in latency can significantly affect real-time applications such as voice and video communication. QoS aims to minimize latency by prioritizing time-sensitive traffic and ensuring faster processing at network devices.

Jitter measurement focuses on variations in packet arrival times. When packets arrive at inconsistent intervals, it can lead to poor audio or video quality. Jitter is especially problematic in streaming and VoIP applications. QoS reduces jitter by maintaining a steady flow of packets through controlled scheduling and prioritization techniques.

Packet loss is another critical metric that indicates how many packets fail to reach their destination. High packet loss can severely degrade application performance and lead to retransmissions, which further increase network congestion. QoS mechanisms help reduce packet loss by managing traffic flow and preventing network overload.
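As a rough illustration of how packet loss can be measured, the sketch below estimates the loss rate from the sequence numbers a receiver actually saw. It assumes sequence numbers increase by one per packet, do not wrap around, and that the first and last packets of the stream arrived; the sample numbers are made up.

```python
def packet_loss_rate(received_seqs):
    """Estimate loss from the sequence numbers that arrived."""
    unique = set(received_seqs)  # ignore duplicate deliveries
    if not unique:
        return 0.0
    expected = max(unique) - min(unique) + 1
    return (expected - len(unique)) / expected


# Hypothetical stream of 10 packets where numbers 4 and 7 never arrived.
print(packet_loss_rate([1, 2, 3, 5, 6, 8, 9, 10]))  # 0.2
```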

Traffic Classification and Marking in QoS

Traffic classification is one of the most important processes in QoS implementation. It involves identifying different types of network traffic and assigning them to specific categories based on their importance and requirements. This allows the network to treat each type of traffic differently according to its needs.

Classification is typically based on several factors, including source and destination IP addresses, protocol types, port numbers, and application behavior. For example, voice traffic may be identified based on specific port ranges used by VoIP applications, while file transfer traffic may be identified using different protocol signatures.
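The idea of classifying by protocol and port can be sketched as a small rule table. Everything here is hypothetical: the class names, the `Packet` type, and especially the port ranges, which vary by vendor and deployment. Real classifiers also inspect addresses and application signatures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Packet:
    protocol: str  # "tcp" or "udp"
    dst_port: int


# Hypothetical rules, checked in order: (class name, protocol, ports).
RULES = [
    ("voice", "udp", range(16384, 32768)),  # assumed RTP media port range
    ("file-transfer", "tcp", (20, 21)),      # FTP data and control
    ("web", "tcp", (80, 443)),
]


def classify(pkt: Packet) -> str:
    """Return the first matching class, or best-effort if none match."""
    for name, protocol, ports in RULES:
        if pkt.protocol == protocol and pkt.dst_port in ports:
            return name
    return "best-effort"


print(classify(Packet("udp", 16400)))  # voice
print(classify(Packet("tcp", 8080)))   # best-effort
```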

Once traffic is classified, it is marked to indicate its priority level. Marking is done by adding specific values to packet headers, which help network devices understand how to handle each packet. These markings ensure that QoS policies are consistently applied across the entire network.

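One common marking method is the Differentiated Services Code Point (DSCP), a 6-bit value carried in the IP header (in the field historically called Type of Service in IPv4 and Traffic Class in IPv6). Each DSCP value identifies a forwarding treatment rather than a simple numeric rank: for example, Expedited Forwarding (EF, value 46) is commonly used for voice, while the default value 0 indicates best-effort handling. Network devices read these markings and apply the handling rules configured for them.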
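Because DSCP occupies the upper six bits of an 8-bit header field, with the lower two bits used for Explicit Congestion Notification (ECN), converting between a DSCP value and the full byte is a simple bit shift. The helper names below are assumptions for illustration; the code points shown (CS0, AF41, EF) are standard values.

```python
# A few standard DSCP code points.
DSCP = {"CS0": 0, "AF41": 34, "EF": 46}


def dscp_to_tos(dscp: int, ecn: int = 0) -> int:
    """Pack a 6-bit DSCP and 2-bit ECN value into the 8-bit header field."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must fit in 6 bits")
    if not 0 <= ecn <= 3:
        raise ValueError("ECN must fit in 2 bits")
    return (dscp << 2) | ecn


def tos_to_dscp(tos: int) -> int:
    """Extract the DSCP from the 8-bit header field."""
    return (tos >> 2) & 0x3F


print(dscp_to_tos(DSCP["EF"]))  # 184
print(tos_to_dscp(184))         # 46
```

On platforms that expose `socket.IP_TOS`, an application could request a marking with `sock.setsockopt(socket.IPPROTO_IP, socket.IP_TOS, dscp_to_tos(46))`, although devices along the path may re-mark or ignore it.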

Traffic classification and marking work together to ensure that important applications receive the necessary resources. Without proper classification, all traffic would be treated equally, leading to inefficiencies and poor performance for critical services.

Queuing Mechanisms in Network Traffic Management

Queuing is a core component of QoS that determines how packets are stored and processed when network congestion occurs. When a network becomes busy and cannot immediately forward all incoming packets, they are placed into queues based on their priority level.

There are different types of queuing mechanisms used in networking, each designed to handle traffic in a specific way. Priority queuing is one of the simplest forms, where high-priority traffic is always processed before lower-priority traffic. This ensures that critical applications experience minimal delay, although it may result in delays for less important traffic.
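Strict priority queuing can be sketched with a heap. In this illustrative version a lower number means higher priority, and a counter keeps packets of equal priority in arrival order. The class name and packet labels are hypothetical.

```python
import heapq
from itertools import count


class PriorityScheduler:
    """Always sends the highest-priority waiting packet first."""

    def __init__(self):
        self._heap = []
        self._arrival = count()  # tie-breaker: preserves FIFO within a priority

    def enqueue(self, priority: int, packet: str) -> None:
        heapq.heappush(self._heap, (priority, next(self._arrival), packet))

    def dequeue(self):
        """Return the next packet to send, or None if nothing is waiting."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]


sched = PriorityScheduler()
sched.enqueue(2, "backup-1")
sched.enqueue(0, "voice-1")
sched.enqueue(1, "web-1")
sched.enqueue(0, "voice-2")

print([sched.dequeue() for _ in range(4)])
# ['voice-1', 'voice-2', 'web-1', 'backup-1']
```

The backup packet arrived first but leaves last, which is exactly the delay for less important traffic that strict priority can cause.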

Weighted queuing is a more balanced approach that assigns different weights to different types of traffic. Instead of completely prioritizing one type over another, it distributes bandwidth proportionally based on predefined rules. This helps maintain fairness while still ensuring that important traffic receives adequate resources.

Fair queuing is another method that aims to provide equal treatment to all traffic flows. It prevents any single application from dominating the network by ensuring that all queues receive a fair share of bandwidth. This method is useful in environments where balanced performance is required across multiple applications.

Queuing plays a crucial role in managing congestion and ensuring smooth data flow. By controlling the order in which packets are transmitted, QoS helps maintain network stability even during heavy traffic conditions.

Bandwidth Management and Traffic Control Techniques

Bandwidth management is another essential aspect of QoS that focuses on controlling how network capacity is distributed among different applications and users. Since network resources are limited, it is important to allocate bandwidth efficiently to prevent congestion and ensure smooth performance.

One common technique used in bandwidth management is traffic shaping. Traffic shaping involves controlling the rate at which data is transmitted into the network. Instead of allowing traffic to flow freely, it regulates the speed of data transmission to match available capacity. This helps prevent sudden spikes in traffic that could lead to congestion.

Another technique is traffic policing, which enforces strict limits on bandwidth usage. If an application exceeds its allocated bandwidth, excess packets may be dropped or marked for lower priority. This ensures that no single application consumes more resources than allowed.

Scheduling algorithms are also used in bandwidth management to determine how packets are transmitted over time. These algorithms decide the order and timing of packet transmission based on priority levels and available resources. Effective scheduling helps maintain consistent performance across all applications.

Bandwidth reservation is another important concept where specific amounts of bandwidth are reserved for critical applications. This ensures that essential services always have access to the required resources, even during periods of heavy network usage.

Together, these techniques help maintain a balanced distribution of network resources, ensuring that all applications receive appropriate levels of service based on their importance.

QoS Models and Traffic Handling Approaches

QoS models define how traffic is managed and prioritized within a network. Different models offer different levels of service quality, depending on the requirements of the network environment.

One of the simplest models is the best-effort approach. In this model, all traffic is treated equally without any prioritization. There are no guarantees regarding delivery, delay, or bandwidth. While this model is easy to implement, it is not suitable for environments that require consistent performance.

A more advanced model is differentiated services, which classifies traffic into different priority levels. Each class is treated differently based on its requirements. High-priority traffic receives better treatment, while lower-priority traffic is handled on a best-effort basis. This model is widely used because it provides flexibility and scalability.

Integrated services is a stricter approach to QoS. In this model, network resources are reserved in advance for specific applications. This ensures guaranteed performance levels for critical services. However, it requires more complex configuration and network-wide support.

Each QoS model offers different advantages depending on the use case. Organizations choose models based on their performance requirements, network size, and application types.

Role of Packet Handling and Prioritization in QoS

Packet handling is a fundamental part of QoS that determines how individual data packets are processed as they move through the network. Each packet carries information that helps network devices decide how it should be treated.

Prioritization is applied based on the classification and marking of packets. High-priority packets are processed faster and placed at the front of transmission queues. This ensures that time-sensitive data reaches its destination with minimal delay.

In addition to prioritization, packet scheduling plays an important role in ensuring efficient delivery. Scheduling determines when each packet is transmitted based on available bandwidth and priority rules. Proper scheduling helps prevent congestion and ensures smooth data flow.

Network devices continuously analyze incoming packets and apply QoS rules dynamically. This real-time processing ensures that changes in traffic conditions are handled efficiently without disrupting ongoing communication.

Importance of Congestion Management in QoS Systems

Congestion occurs when network traffic exceeds the available capacity, leading to delays, packet loss, and reduced performance. QoS plays a critical role in managing congestion by controlling how traffic flows through the network.

When congestion occurs, QoS mechanisms apply the configured traffic handling rules to relieve the load. This may include queuing lower-priority traffic, reducing transmission rates, or dropping non-essential packets.

Congestion management ensures that critical applications continue to function smoothly even during peak traffic periods. It also helps maintain overall network stability by preventing overload conditions.

Effective congestion control requires continuous monitoring of network conditions and dynamic adjustment of QoS policies. This adaptive approach allows networks to respond quickly to changing traffic patterns and maintain consistent performance levels.

Advanced Queuing Strategies in QoS Networks

In modern networking environments, simple first-come-first-served packet handling is not sufficient to maintain performance for diverse applications. Advanced queuing strategies are therefore used to intelligently manage how packets are stored, prioritized, and transmitted when network congestion occurs. These strategies are designed to balance fairness, efficiency, and priority-based delivery, ensuring that critical services remain stable even under heavy load conditions.

One widely used approach is weighted queuing, where different types of traffic are assigned different importance levels. Instead of allowing one type of traffic to dominate the network, weighted queuing distributes bandwidth in a controlled manner. High-priority applications such as voice communication or video conferencing receive more frequent transmission opportunities, while lower-priority traffic is still allowed to pass but at a reduced rate. This creates a balanced environment where all applications can function without completely blocking each other.

Another important strategy is class-based queuing, where traffic is divided into predefined classes. Each class represents a group of applications with similar performance requirements. For example, real-time communication tools may be placed in a high-priority class, while background updates or file transfers are placed in lower classes. Each class is then assigned a portion of available bandwidth, ensuring predictable performance.

Low-latency queuing is specifically designed for time-sensitive traffic. It places delay-sensitive packets in a strict-priority queue that is serviced before all others. This is particularly important for applications like VoIP and live streaming, where even small delays can significantly affect quality. However, careful configuration is necessary, typically by limiting how much bandwidth the priority queue may use, to avoid starving lower-priority traffic.

Round-robin queuing is another method that provides fairness by cycling through queues in sequence. Each queue is given a chance to transmit packets in turn, preventing any single queue from being ignored. Weighted round-robin enhances this concept by giving certain queues more opportunities based on their assigned weight.
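Weighted round-robin is easy to sketch: on each pass, every queue may send up to its weight in packets. The version below counts packets rather than bytes, which is a simplification, and the queue names and weights are hypothetical.

```python
from collections import deque


def weighted_round_robin(queues, weights):
    """Drain the queues in WRR order and return the transmission sequence.

    queues:  dict mapping queue name -> deque of packets (emptied in place)
    weights: dict mapping queue name -> packets allowed per round
    """
    sent = []
    while any(queues.values()):  # an empty deque is falsy
        for name, queue in queues.items():
            for _ in range(weights[name]):
                if not queue:
                    break
                sent.append(queue.popleft())
    return sent


queues = {
    "voice": deque(["v1", "v2", "v3", "v4"]),
    "data": deque(["d1", "d2"]),
}
print(weighted_round_robin(queues, {"voice": 2, "data": 1}))
# ['v1', 'v2', 'd1', 'v3', 'v4', 'd2']
```

With a 2:1 weighting, voice gets two sending opportunities for every one that data gets, yet data is never locked out.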

These advanced queuing strategies are often combined within a single QoS system to achieve more precise traffic control. By using multiple methods together, networks can adapt to varying traffic conditions while maintaining consistent performance across different applications.

Deep Dive into Bandwidth Allocation Techniques

Bandwidth allocation is a core function of QoS that determines how much network capacity is assigned to different applications, users, or services. Since network resources are limited, effective allocation is necessary to prevent congestion and ensure smooth performance.

Static bandwidth allocation assigns fixed portions of bandwidth to specific applications or departments. This method is simple and predictable, making it suitable for environments with stable traffic patterns. However, it can lead to inefficiency if allocated bandwidth is not fully utilized.

Dynamic bandwidth allocation, on the other hand, adjusts resource distribution based on real-time network conditions. When some applications are not using their full allocation, unused bandwidth can be temporarily reassigned to other active services. This improves overall efficiency and ensures better utilization of available resources.

Minimum bandwidth guarantees are often used to ensure that critical applications always have access to a baseline level of performance. Even if the network is congested, these applications will still receive their required share of bandwidth. This is particularly important for business-critical systems that must remain operational at all times.

Maximum bandwidth limits are also applied to prevent any single application from consuming excessive network resources. By setting upper limits, QoS ensures that no service can overwhelm the network and degrade performance for others.

Bandwidth borrowing is another advanced technique where applications can temporarily use unused bandwidth from other services. This allows for flexible resource usage while still maintaining priority rules. When the service that lent the bandwidth becomes active again, the borrowed bandwidth is returned to it.
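Minimum guarantees, maximum limits, and borrowing can be combined in a single allocation pass. The following is a simplified sketch, not how any particular device works: it assumes the guarantees together fit within the link, and it hands spare capacity out in priority order. The class names and figures (in kbit/s) are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class TrafficClass:
    name: str
    demand: int      # what the class currently wants to send
    guaranteed: int  # minimum bandwidth guarantee
    ceiling: int     # maximum bandwidth limit


def allocate(link_kbps, classes):
    """Allocate bandwidth; `classes` is listed from highest to lowest priority."""
    # Step 1: each class gets its guarantee, but never more than it needs.
    alloc = {c.name: min(c.demand, c.guaranteed) for c in classes}
    spare = link_kbps - sum(alloc.values())
    # Step 2: unused capacity is lent out, capped by each class's ceiling.
    for c in classes:
        wanted = min(c.demand, c.ceiling) - alloc[c.name]
        extra = max(0, min(wanted, spare))
        alloc[c.name] += extra
        spare -= extra
    return alloc


classes = [
    TrafficClass("voice", demand=1000, guaranteed=2000, ceiling=2000),
    TrafficClass("video", demand=6000, guaranteed=3000, ceiling=5000),
    TrafficClass("bulk", demand=9000, guaranteed=1000, ceiling=10000),
]
print(allocate(10000, classes))
# {'voice': 1000, 'video': 5000, 'bulk': 4000}
```

Here voice uses only half of its guarantee, so video and bulk borrow the remainder, while video is still held to its 5000 kbit/s ceiling.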

These allocation techniques work together to create a controlled and efficient network environment where resources are distributed fairly and intelligently based on application needs.

Traffic Shaping and Its Role in Network Stability

Traffic shaping is a QoS technique used to control the rate at which data is transmitted into the network. Instead of allowing traffic to flow at uncontrolled speeds, traffic shaping smooths out bursts of data to maintain a steady transmission rate. This helps prevent congestion and improves overall network stability.

One of the main benefits of traffic shaping is its ability to reduce sudden spikes in network usage. When multiple devices send large amounts of data at the same time, congestion can quickly occur. Traffic shaping prevents this by buffering excess packets and releasing them gradually.
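The buffer-and-release behavior can be sketched by computing when each packet is allowed to leave. This is a deliberately simple constant-rate shaper with no burst allowance and an unlimited buffer, both of which are assumptions; the rate and packet sizes are made up.

```python
def shape(arrivals, rate_bps):
    """Return the departure time of each packet under a constant-rate shaper.

    arrivals: list of (arrival_time_seconds, size_bytes), sorted by time
    """
    departures = []
    next_free = 0.0  # earliest time the shaper may release another packet
    for arrival_time, size_bytes in arrivals:
        start = max(arrival_time, next_free)  # held in the buffer if too early
        departures.append(start)
        next_free = start + size_bytes * 8 / rate_bps
    return departures


# Hypothetical burst: three 500-byte packets arrive at once, one arrives later.
burst = [(0.0, 500), (0.0, 500), (0.0, 500), (2.0, 500)]
print(shape(burst, rate_bps=8000))  # [0.0, 0.5, 1.0, 2.0]
```

The three packets that arrived together are spread half a second apart, while the late packet passes straight through because the shaper is idle by then.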

Another important aspect of traffic shaping is its role in maintaining predictable network performance. By controlling data flow, it ensures that applications receive consistent bandwidth over time. This is especially important for real-time services that require stable transmission conditions.

Traffic shaping also improves fairness in shared network environments. It prevents aggressive applications from consuming excessive bandwidth and negatively impacting other users. By regulating transmission rates, it ensures that all traffic types are treated appropriately.

In addition, traffic shaping can be applied at different levels of the network, including routers, switches, and edge devices. This allows for flexible implementation depending on network design and requirements.

Overall, traffic shaping plays a critical role in maintaining smooth and reliable network performance, especially in environments with high traffic variability.

Traffic Policing and Enforcement Mechanisms

Traffic policing is another important QoS mechanism that enforces strict rules on network traffic behavior. While traffic shaping smooths out data flow, traffic policing focuses on enforcing limits and taking action when those limits are exceeded.

When a traffic flow exceeds its assigned bandwidth limit, policing mechanisms may drop excess packets immediately or mark them for lower priority handling. This ensures that network policies are strictly enforced and that no application can exceed its allocated resources.
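Policing is commonly modeled with a token bucket: tokens accumulate at the permitted rate up to a burst limit, and a packet is allowed only if enough tokens are available. The sketch below is a minimal single-rate version that drops non-conforming packets; the class name and parameters are assumptions, and time is passed in explicitly so the behavior is easy to follow.

```python
class TokenBucketPolicer:
    """Single-rate policer: conforming packets pass, excess packets are dropped."""

    def __init__(self, rate_bps: float, burst_bytes: int):
        self.rate_bytes_per_s = rate_bps / 8
        self.burst_bytes = burst_bytes
        self.tokens = float(burst_bytes)  # the bucket starts full
        self.last_time = 0.0

    def allow(self, now: float, size_bytes: int) -> bool:
        # Refill tokens for the time elapsed, never exceeding the burst size.
        elapsed = now - self.last_time
        self.last_time = now
        self.tokens = min(self.burst_bytes,
                          self.tokens + elapsed * self.rate_bytes_per_s)
        if size_bytes <= self.tokens:
            self.tokens -= size_bytes
            return True
        return False  # out of profile: drop, with no buffering


# Hypothetical policy: 8 kbit/s (1000 bytes/s) with a 1500-byte burst.
policer = TokenBucketPolicer(rate_bps=8000, burst_bytes=1500)
times = [0.0, 0.1, 0.2, 1.5]
print([policer.allow(t, 1000) for t in times])
# [True, False, False, True]
```

A variant could mark excess packets for lower-priority handling instead of returning `False`, which matches the second option described above.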

Traffic policing is often used at network entry points where traffic first enters a controlled environment. By applying rules early in the transmission process, it prevents excessive traffic from spreading through the network.

One key advantage of traffic policing is its simplicity and efficiency. It does not require buffering or delaying packets, making it suitable for high-speed networks where immediate enforcement is necessary.

However, because policing can result in packet drops, it must be carefully configured to avoid negatively impacting critical applications. In many cases, it is combined with traffic shaping to create a balanced approach that both controls and smooths traffic flow.

Together, traffic shaping and policing provide a comprehensive framework for managing bandwidth usage and maintaining network performance under varying conditions.

QoS in Real-Time Communication Systems

Real-time communication systems such as voice calls, video conferencing, and live streaming are highly sensitive to network performance. Even small delays or disruptions can significantly degrade user experience. QoS plays a vital role in ensuring that these systems operate smoothly and reliably.

Voice communication requires extremely low latency to maintain natural conversation flow. If delays are too high, conversations become fragmented and difficult to follow. QoS ensures that voice packets are given the highest priority so they can be delivered quickly and consistently.

Video conferencing has similar requirements but also depends heavily on bandwidth and jitter control. High-quality video requires a steady stream of data to maintain smooth playback. QoS helps by reducing packet variation and ensuring consistent delivery rates.

Live streaming services also rely on QoS to prevent buffering and interruptions. By prioritizing streaming traffic and managing bandwidth effectively, QoS ensures that users experience continuous playback without frequent pauses.

In addition to prioritization, QoS also helps in managing network congestion during peak usage times. When multiple users access real-time services simultaneously, QoS ensures that critical traffic is still delivered with minimal disruption.

These capabilities make QoS essential for modern communication platforms that depend on real-time data transmission.

Impact of Network Congestion on Application Performance

Network congestion occurs when the demand for bandwidth exceeds available capacity. This can lead to a range of performance issues, including increased latency, packet loss, and reduced throughput. Understanding the impact of congestion is essential for effective QoS design.

When congestion occurs, packets may be delayed as they wait in queues for transmission. This increases latency and can severely affect real-time applications. In severe cases, packets may be dropped entirely, leading to retransmissions and further increasing network load.
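A back-of-the-envelope calculation shows how quickly queuing adds delay. The helper below estimates how long a newly arrived packet waits while the data already queued ahead of it is transmitted; the link speed and queue depth are hypothetical.

```python
def queuing_delay_ms(bytes_ahead: int, link_bps: int) -> float:
    """Time in milliseconds to transmit the data queued ahead of a packet."""
    return bytes_ahead * 8 * 1000 / link_bps


# Hypothetical case: fifty 1500-byte packets queued on a 10 Mbit/s link.
print(queuing_delay_ms(50 * 1500, 10_000_000))  # 60.0
```

Sixty milliseconds of extra delay from one congested hop is already noticeable for a voice call, which is why time-sensitive traffic is kept out of long queues.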

Congestion also affects application responsiveness. Users may experience delays when loading web pages, downloading files, or interacting with cloud-based applications. This can reduce productivity and negatively impact user satisfaction.

In streaming applications, congestion often results in buffering, reduced video quality, or interrupted playback. For voice communication, it can lead to distorted audio or dropped calls.

QoS addresses these issues by prioritizing traffic and managing congestion proactively. By controlling how packets are handled during high traffic conditions, QoS helps maintain stable performance even when the network is under pressure.

Role of Network Devices in QoS Implementation

Network devices such as routers and switches play a central role in implementing QoS policies. These devices are responsible for analyzing incoming traffic, applying classification rules, and enforcing prioritization mechanisms.

Routers typically handle QoS at the network layer, making decisions about how packets should be forwarded based on their priority levels. They use routing tables and QoS policies to ensure that high-priority traffic is processed efficiently.

Switches often implement QoS at the data link layer, focusing on traffic within local networks. They can assign priority levels to different ports or VLANs, ensuring that internal traffic is managed effectively.

Firewalls and edge devices also contribute to QoS by enforcing traffic rules at network boundaries. They can block, prioritize, or shape traffic before it enters the main network infrastructure.

Together, these devices form a coordinated system that ensures QoS policies are consistently applied across the entire network path.

Importance of Policy Definition in QoS Systems

QoS effectiveness depends heavily on well-defined policies that clearly specify how different types of traffic should be handled. These policies are typically based on business requirements and application needs.

Policy definition involves identifying critical applications, determining their performance requirements, and assigning appropriate priority levels. This process requires collaboration between network administrators and business stakeholders.
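One way to capture the outcome of this process is a small policy table that maps applications to traffic classes. The sketch below is illustrative only: the application names, class names, and latency targets are hypothetical, while the DSCP values (46 for EF, 34 for AF41, 18 for AF21, 8 for CS1) are standard code points.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class TrafficClass:
    name: str
    dscp: int                      # standard DSCP code point for the class
    max_latency_ms: Optional[int]  # illustrative target; None means no target


# Unlisted applications fall back to best effort (DSCP 0).
BEST_EFFORT = TrafficClass("best-effort", 0, None)

CLASSES = {
    "voice": TrafficClass("voice", 46, 150),         # EF
    "video": TrafficClass("video", 34, 200),         # AF41
    "business": TrafficClass("business", 18, None),  # AF21
    "bulk": TrafficClass("bulk", 8, None),           # CS1
}

# Hypothetical application names agreed with business stakeholders.
APP_TO_CLASS = {
    "softphone": "voice",
    "video-meetings": "video",
    "erp": "business",
    "nightly-backup": "bulk",
}


def class_for(app: str) -> TrafficClass:
    """Return the traffic class for an application, defaulting to best effort."""
    return CLASSES.get(APP_TO_CLASS.get(app, ""), BEST_EFFORT)
```

Keeping the table in one place makes the policy easy to review with stakeholders and gives every unlisted application a defined default.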

Clear policies help ensure consistency in traffic handling and prevent conflicts between different applications. They also provide a framework for monitoring and adjusting QoS settings as network conditions change.

Without proper policies, QoS systems can become ineffective or misconfigured, leading to poor performance and inefficient resource usage.

QoS in Modern Enterprise and Cloud Networks

In modern enterprise environments, Quality of Service has become a core requirement rather than an optional enhancement. Organizations now rely heavily on cloud-based applications, remote collaboration tools, and real-time communication platforms. These systems generate highly diverse traffic patterns that must be managed carefully to maintain performance and reliability.

In enterprise networks, QoS is often implemented across multiple layers, including local networks, wide area networks, and cloud connectivity points. This ensures consistent performance regardless of where applications are hosted or accessed. For example, employees working remotely using cloud-based tools still require the same level of performance as those working inside the office network.

Cloud computing introduces additional complexity because resources are shared across multiple users and locations. QoS helps ensure that each user or application receives fair and appropriate access to network resources. Without QoS, cloud services may experience unpredictable performance due to shared infrastructure limitations.

In large organizations, different departments often have different network requirements. Finance systems may require high reliability and low latency for transaction processing, while marketing teams may prioritize video conferencing and content sharing. QoS allows network administrators to create policies that match these varying needs within a single infrastructure.

Virtualization has also increased the importance of QoS. Virtual machines and containers often share the same physical hardware, meaning network traffic must be carefully controlled to prevent resource contention. QoS ensures that critical virtual workloads are prioritized over less important background processes.

Overall, QoS plays a vital role in ensuring that enterprise and cloud networks remain efficient, scalable, and capable of supporting modern digital operations.

Role of Service Level Agreements in QoS Management

Service Level Agreements (SLAs) are formal commitments between service providers and organizations that define expected levels of network performance. These agreements are closely tied to QoS because they establish measurable performance targets that QoS systems must support.

SLAs typically define parameters such as minimum bandwidth, maximum latency, acceptable packet loss, and uptime guarantees. These metrics are used to evaluate whether a network is meeting its performance obligations. QoS mechanisms are then configured to help meet the bandwidth, latency, and loss targets; uptime depends mainly on redundancy and availability design rather than on QoS itself.
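Checking measurements against these parameters can be sketched as a simple comparison. The field names and threshold values below are assumptions chosen for illustration and do not come from any specific agreement.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class SlaTargets:
    min_bandwidth_mbps: float
    max_latency_ms: float
    max_packet_loss_pct: float
    min_uptime_pct: float


@dataclass(frozen=True)
class Measurement:
    bandwidth_mbps: float
    latency_ms: float
    packet_loss_pct: float
    uptime_pct: float


def sla_violations(sla: SlaTargets, m: Measurement) -> List[str]:
    """Return the names of SLA parameters that the measurement fails."""
    violations = []
    if m.bandwidth_mbps < sla.min_bandwidth_mbps:
        violations.append("bandwidth")
    if m.latency_ms > sla.max_latency_ms:
        violations.append("latency")
    if m.packet_loss_pct > sla.max_packet_loss_pct:
        violations.append("packet_loss")
    if m.uptime_pct < sla.min_uptime_pct:
        violations.append("uptime")
    return violations
```

An empty list means the measurement meets every target; any returned name identifies a parameter that needs corrective action.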

In business environments, SLAs are essential for maintaining accountability. They ensure that both internal IT teams and external service providers deliver reliable network performance. If performance falls below agreed thresholds, corrective actions may be required.

QoS plays a direct role in supporting SLA requirements by prioritizing traffic and managing network resources. For example, if a critical application has a strict latency requirement defined in an SLA, QoS can be configured so that its traffic is scheduled ahead of less critical data on every device the organization or provider controls.
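Putting one class of traffic ahead of another can be sketched as a strict priority scheduler. This is a minimal illustration, with the class name `StrictPriorityScheduler` and the convention that a lower number means higher priority both chosen for this example.

```python
import heapq
import itertools


class StrictPriorityScheduler:
    """Always dequeue the highest-priority packet; FIFO within a priority."""

    def __init__(self):
        self._heap = []
        self._seq = itertools.count()  # tie-breaker that preserves arrival order

    def enqueue(self, priority: int, packet: str) -> None:
        # Lower priority number means the packet is served earlier.
        heapq.heappush(self._heap, (priority, next(self._seq), packet))

    def dequeue(self):
        """Return the next packet to transmit, or None if the queue is empty."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]
```

A known drawback of strict priority is that sustained high-priority traffic can starve lower classes, which is why deployments commonly limit how much bandwidth the priority class may use.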

In cloud services, SLAs are particularly important because customers depend on shared infrastructure. QoS helps cloud providers meet these agreements by managing traffic across multiple tenants and workloads.

By aligning QoS policies with SLAs, organizations can ensure predictable performance and maintain trust between service providers and users.

Monitoring and Performance Optimization in QoS Networks

Continuous monitoring is essential for maintaining effective QoS in any network environment. Without monitoring, administrators cannot determine whether QoS policies are working as intended or whether adjustments are needed.

Network monitoring tools track key performance indicators such as latency, jitter, packet loss, and bandwidth usage. These metrics provide real-time insights into network behavior and help identify potential issues before they impact users.
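These indicators can be derived from simple probe results. The sketch below assumes a list of measured latencies for the probes that were answered, plus the number of probes sent. Jitter is computed here as the mean absolute difference between consecutive latencies, which is a simplification; monitoring tools often use a smoothed estimator instead. The function name and the dictionary keys are assumptions for this example.

```python
from typing import Dict, List, Optional


def summarize_probes(sent_count: int,
                     latencies_ms: List[float]) -> Dict[str, Optional[float]]:
    """Summarize loss, average latency, and jitter from probe results."""
    if sent_count <= 0:
        raise ValueError("sent_count must be positive")
    received = len(latencies_ms)
    loss_pct = 100.0 * (sent_count - received) / sent_count
    if received == 0:
        # Nothing came back, so latency and jitter are undefined.
        return {"loss_pct": loss_pct, "avg_latency_ms": None, "jitter_ms": None}
    avg = sum(latencies_ms) / received
    # Differences between each latency sample and the next one.
    diffs = [abs(b - a) for a, b in zip(latencies_ms, latencies_ms[1:])]
    jitter = sum(diffs) / len(diffs) if diffs else 0.0
    return {"loss_pct": loss_pct, "avg_latency_ms": avg, "jitter_ms": jitter}
```

For five probes sent with replies of 20, 30, 20, and 30 ms, this reports 20% loss, an average latency of 25 ms, and a jitter of 10 ms.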

Performance optimization involves analyzing monitoring data and adjusting QoS policies accordingly. For example, if a particular application is experiencing high latency, its priority level may be increased or additional bandwidth may be allocated to it.

Historical data is also useful for identifying long-term trends in network usage. This information helps administrators plan capacity upgrades and refine QoS strategies over time.

In addition to manual monitoring, many modern systems use automated tools that dynamically adjust QoS settings based on network conditions. These intelligent systems can respond quickly to changes in traffic patterns, ensuring consistent performance without constant human intervention.

Effective monitoring and optimization ensure that QoS remains aligned with evolving network demands and business requirements.

Challenges in Implementing QoS in Complex Networks

Although QoS provides significant benefits, implementing it in complex networks is not without challenges. One of the main difficulties is accurately identifying and classifying traffic. With thousands of applications running across modern networks, distinguishing between different types of traffic can be complex.

Encryption adds another layer of difficulty. Many modern applications encrypt their traffic, making it harder for network devices to inspect packet contents and determine priority levels. Classification must then rely on information that remains visible, such as IP addresses, ports, and existing header markings, or on flow behavior such as packet sizes and timing, rather than on content.

Scalability is also a challenge in large networks. As the number of users and devices increases, maintaining consistent QoS policies across the entire infrastructure becomes more difficult. Policies must be carefully designed to scale efficiently without creating bottlenecks.

Another challenge is configuration complexity. QoS requires precise configuration of multiple parameters, including bandwidth limits, priority levels, and queuing rules. Incorrect configuration can lead to performance issues or unintended traffic behavior.
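Some configuration mistakes can be caught with basic checks before a policy is deployed. The sketch below validates one such rule under an assumed model in which each class is guaranteed a percentage of link bandwidth: every value must be sensible and the guarantees must not add up to more than the link can carry. The function name and class names are hypothetical.

```python
from typing import Dict, List


def validate_allocations(guaranteed_pct: Dict[str, float]) -> List[str]:
    """Return a list of problems found in per-class bandwidth guarantees."""
    errors = []
    for name, pct in guaranteed_pct.items():
        # Each class needs a positive share no larger than the whole link.
        if not 0 < pct <= 100:
            errors.append(f"class '{name}' has invalid share {pct}%")
    total = sum(guaranteed_pct.values())
    if total > 100:
        errors.append(f"total guaranteed bandwidth is {total}%, which exceeds 100%")
    return errors
```

For example, guarantees of 20%, 30%, and 40% pass, while 50%, 40%, and 30% are rejected because they promise 120% of the link.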

Interoperability between different network devices can also cause issues. Not all hardware and software platforms implement QoS in the same way, which can lead to inconsistencies in traffic handling across the network.

Despite these challenges, careful planning and proper implementation can help organizations successfully deploy QoS in even the most complex environments.

Security Considerations in QoS Environments

Security and QoS often work together in modern networks, but they must be carefully balanced. While QoS focuses on performance, security focuses on protecting data and preventing unauthorized access.

One potential issue is that malicious traffic could be incorrectly classified as high priority if QoS policies are not properly configured. This could allow harmful traffic to consume valuable network resources.
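A common safeguard is a trust boundary: priority markings are accepted only from ports that the administrator trusts, and everything else is reset to best effort. The sketch below illustrates the idea with DSCP markings; the port names and the function name are hypothetical.

```python
# Hypothetical ports whose markings are trusted, such as an uplink to
# another managed switch or a port dedicated to an IP phone.
TRUSTED_PORTS = {"uplink-1", "ip-phone-7"}


def dscp_at_ingress(port: str, dscp: int) -> int:
    """Keep the sender's DSCP on trusted ports; reset it to 0 elsewhere."""
    if not 0 <= dscp <= 63:
        raise ValueError("DSCP must be between 0 and 63")
    return dscp if port in TRUSTED_PORTS else 0
```

With this rule, a host on an untrusted port that marks its own traffic as high priority is still treated as best effort.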

Firewalls and intrusion detection systems often work alongside QoS mechanisms to ensure that only legitimate traffic receives priority treatment. These systems help identify and block suspicious activity before it affects network performance.

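Encryption also plays a dual role in security and QoS. While it protects data from unauthorized access, it can make traffic classification more difficult because devices can no longer inspect packet contents. Network administrators must therefore rely on techniques that do not depend on payload inspection, such as markings applied by trusted endpoints or classification based on addresses, ports, and flow behavior.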

Security policies must also consider QoS priorities to avoid conflicts. For example, security scans or backups should not interfere with critical real-time applications.

By integrating security and QoS strategies, organizations can ensure both high performance and strong protection across their networks.

Future Trends in Quality of Service Technologies

The future of QoS is closely linked to advancements in networking technologies such as 5G, artificial intelligence, and edge computing. These technologies are expected to significantly increase the demand for intelligent traffic management systems.

5G networks, for example, require highly advanced QoS mechanisms to support ultra-low latency applications such as autonomous vehicles, augmented reality, and remote surgery. These applications demand extremely precise traffic control and near-instant data delivery.

Artificial intelligence is also being used to enhance QoS by enabling predictive traffic management. AI systems can analyze network patterns and automatically adjust QoS policies before congestion occurs. This proactive approach improves efficiency and reduces manual intervention.
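The idea of acting before congestion occurs can be illustrated without any machine learning. The sketch below is a deliberately simple statistical stand-in, not an AI system: it smooths recent link utilization samples with an exponentially weighted moving average and signals when the smoothed value crosses a threshold. The function names, the smoothing factor, and the 60% threshold are all assumptions for this example.

```python
from typing import Iterable, Optional


def ewma(samples: Iterable[float], alpha: float = 0.5) -> Optional[float]:
    """Exponentially weighted moving average; newer samples weigh more."""
    estimate = None
    for s in samples:
        estimate = s if estimate is None else alpha * s + (1 - alpha) * estimate
    return estimate


def should_act_early(utilization_pct: Iterable[float],
                     threshold_pct: float = 60.0,
                     alpha: float = 0.5) -> bool:
    """Return True when smoothed utilization reaches the threshold."""
    estimate = ewma(utilization_pct, alpha)
    return estimate is not None and estimate >= threshold_pct
```

A rising series such as 40, 60, 80 yields a smoothed value of 65 and triggers early action, whereas a steady 40 does not. A production system would use richer models and many more signals than this.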

Edge computing is another important trend that impacts QoS. By processing data closer to the source, edge networks reduce latency and improve performance. QoS ensures that traffic between edge devices and central systems is managed efficiently.

Software-defined networking (SDN) is also transforming QoS by allowing centralized control of network behavior. This makes it easier to implement and adjust QoS policies dynamically across large-scale networks.

As networks continue to evolve, QoS will remain a fundamental technology for ensuring reliable and efficient communication.

Conclusion

Quality of Service is a critical component of modern networking that ensures data is delivered efficiently, reliably, and according to priority. It enables networks to handle diverse traffic types while maintaining stable performance for essential applications.

Through mechanisms such as classification, queuing, bandwidth management, and traffic shaping, QoS provides structured control over how data flows across a network. It helps prevent congestion, reduce delays, and improve overall user experience.

In both enterprise and cloud environments, QoS plays a key role in supporting real-time communication, business applications, and digital services. It ensures that critical operations continue to function smoothly even under heavy network load.

As technology continues to advance, QoS will remain essential for managing increasingly complex and high-demand networks.